ScyllaDB powers real-time AI with low latency, high throughput, and billion-scale data.

ScyllaDB is purpose-built for data-intensive apps that require high throughput & predictable low latency.

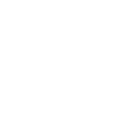

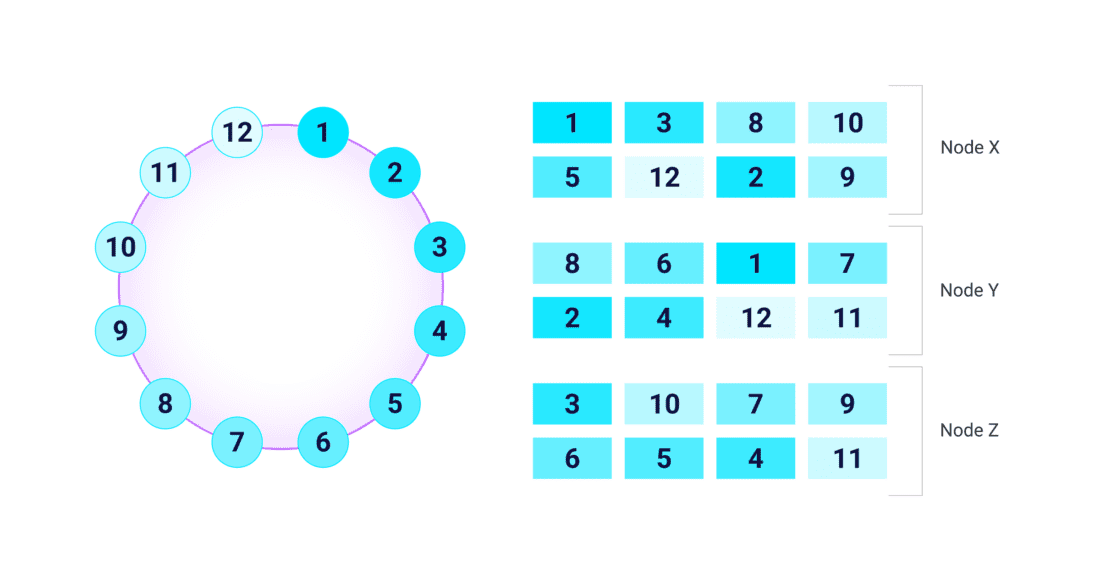

ScyllaDB is a distributed database written in C++ and designed to fully exploit modern cloud infrastructure. It uses a shard-per-core architecture: each CPU core has dedicated resources and independently handles its own portion of the data for maximum efficiency. Asynchronous communication and a focus on low-level optimizations minimize latency. Data is distributed across multiple nodes arranged as a virtual ring, ensuring fault tolerance with no single point of failure. Prior to ScyllaDB 5.4, each node was further partitioned into virtual nodes (vNodes); with ScyllaDB 6.0, this is transitioning to Tablets, a more granular, flexible, and higher-performance approach.

| Cluster | The first layer to understand is the cluster: a collection of interconnected nodes organized into a virtual ring architecture across which data is distributed. All nodes are equal peers. Because there is no defined leader, the cluster has no single point of failure. A cluster need not reside in a single location: data can be distributed across a geographically dispersed cluster using multi-datacenter replication. More on cluster creation and management can be found here. |

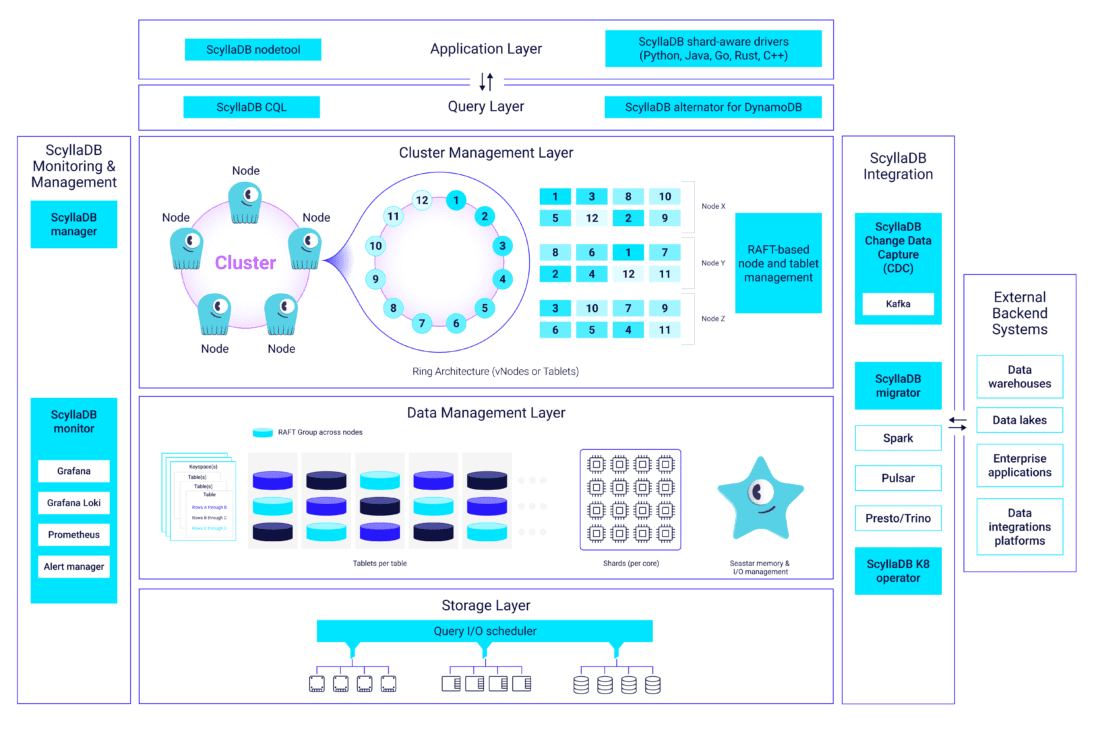

| Node | Nodes can be individual on-premises servers or virtual servers (public cloud instances) that use a subset of the hardware on a larger physical server. The data of the entire cluster is distributed as evenly as possible across the nodes. To even out the distribution further, ScyllaDB uses logical units known as virtual nodes (vNodes) or, in the most recent versions, Tablets. A cluster can store multiple replicas of the same data on different nodes for reliability. ScyllaDB nodes can be scaled up to larger instances before scaling out, which reduces complexity and improves cloud resource utilization and cost. |

| Shard | ScyllaDB divides data even further, creating shards by assigning a fragment of the total data in a node to a specific CPU core, along with its associated memory (RAM) and persistent storage (such as NVMe SSD). Shards operate mostly independently of one another, an approach known as a “shared nothing” design. This greatly reduces contention and the need for expensive locks. More on how ScyllaDB maps shards to CPUs can be found here. |
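
To make the shard idea concrete, here is a minimal sketch of how a token could be mapped to one of a node's shards. The function name `shard_for_token` and the exact arithmetic are assumptions chosen for illustration; ScyllaDB's real mapping has additional tuning parameters and is not reproduced here.

```python
def shard_for_token(token: int, shard_count: int) -> int:
    """Map a signed 64-bit token to a shard index in [0, shard_count).

    Illustrative only: the token space is split into `shard_count`
    equally sized, contiguous slices, one per CPU core.
    """
    if shard_count < 1:
        raise ValueError("shard_count must be at least 1")
    if not -(2**63) <= token < 2**63:
        raise ValueError("token must fit in a signed 64-bit integer")
    biased = token + 2**63            # shift into the range 0 .. 2**64 - 1
    return (biased * shard_count) >> 64


if __name__ == "__main__":
    for t in (-(2**63), 0, 2**63 - 1):
        print(t, "->", shard_for_token(t, shard_count=8))
```

Because every token belongs to exactly one shard, a request for a given partition can be handled by a single core without coordinating with the others.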

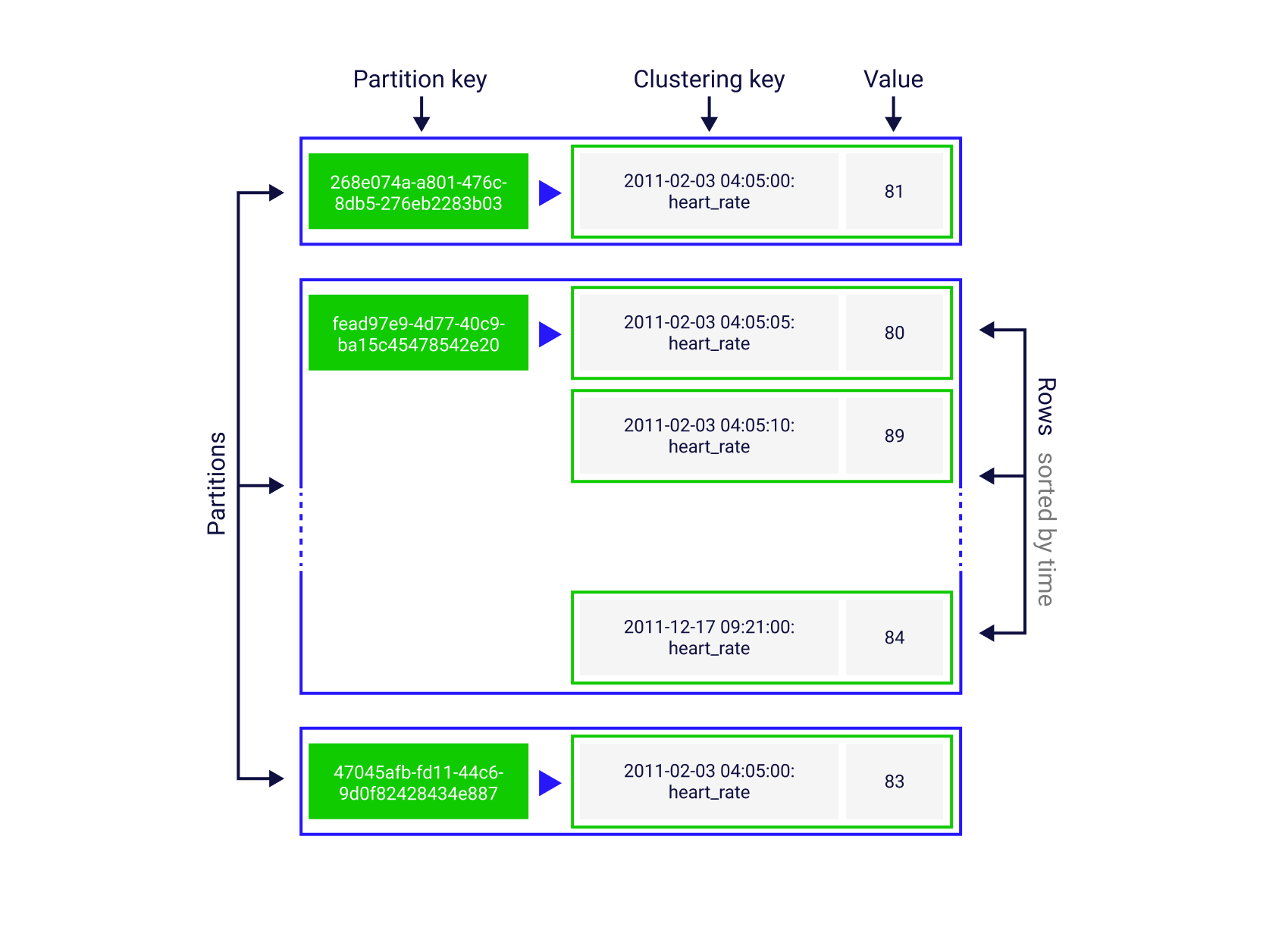

| Data Model | ScyllaDB’s data model has led to its being called a “wide column” database, though we sometimes refer to it as a “key-key-value” database to reflect the partitioning and clustering keys. It is synonymous with Cassandra’s data model, and parallels can be drawn to the DynamoDB data model. Compatibility and extensions for all CQL commands can be found here in the Developer’s documentation. |

| Keyspace | A keyspace is the top-level container for data. It defines the replication strategy and replication factor (RF) for the data held within ScyllaDB. For example, users may wish to store two, three, or even more copies of the same data so that the data remains safe if one or more nodes are lost. |

| Table | Within a keyspace, data is stored in separate tables. Tables are two-dimensional data structures composed of columns and rows. Unlike tables in a relational (SQL) database, tables in ScyllaDB are standalone; you cannot make a JOIN across tables. |

| Partition | Tables in ScyllaDB can be quite large, often measured in terabytes. Tables are therefore divided into smaller chunks, called partitions, which are distributed as evenly as possible across nodes and shards by way of vNodes or Tablets. With vNodes, this distribution is static and established only at bootstrap. With Tablets, the distribution can be continually adjusted to keep data balanced and avoid hot spots. |

| Rows | Each partition contains one or more rows of data sorted in a particular order. Not every column needs a value in every row, which makes ScyllaDB more efficient for storing “sparse data.” |

| Columns | Data in a table row is divided into columns. A specific row-and-column entry is referred to as a cell. Some columns, known as partition and clustering keys, define how data is indexed and sorted. |

| Cell | Each row holds its data in cells, one cell for each column. A cell can be of any data type, such as TEXT or INT, or a collection of basic data types or other collections. Users can also create user-defined types, enriching the table schema further. |
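
The “key-key-value” description above can be sketched with plain Python dictionaries: a partition key selects a partition, a clustering key selects a row within it, and each row holds only the cells that were actually written. The `Table` class and its method names are hypothetical and exist only for illustration; this is a model of the concept, not of ScyllaDB's storage format.

```python
class Table:
    """Toy key-key-value table: partition key -> clustering key -> cells."""

    def __init__(self):
        self._partitions = {}

    def upsert(self, partition_key, clustering_key, **cells):
        # Writing to an existing row merges cells, as an upsert would.
        partition = self._partitions.setdefault(partition_key, {})
        partition.setdefault(clustering_key, {}).update(cells)

    def rows(self, partition_key):
        # Rows within a partition come back sorted by clustering key.
        partition = self._partitions.get(partition_key, {})
        return [(ck, partition[ck]) for ck in sorted(partition)]


if __name__ == "__main__":
    readings = Table()
    readings.upsert("sensor-1", 20, temperature=21.5)
    readings.upsert("sensor-1", 10, temperature=20.0, humidity=40)
    for clustering_key, cells in readings.rows("sensor-1"):
        print(clustering_key, cells)   # the row at 20 has no humidity cell
```

Rows that lack a value for some column simply carry no cell for it, which is what makes sparse data cheap to store in this model.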

ScyllaDB includes methods to find particularly large partitions and large rows that can cause performance problems. ScyllaDB also caches the most frequently used rows in a memory-based cache, to avoid expensive disk-based lookups on so-called “hot partitions.”

Learn best practices for data modeling in ScyllaDB.

| Ring | All data in ScyllaDB can be visualized as a ring of token ranges, with each partition mapped to a single hashed token (though, conversely, a token can be associated with one or more partitions). These tokens are used to distribute data around the cluster, balancing it as evenly as possible across nodes and shards. |

| vNode | The ring is broken into vNodes (Virtual Nodes), each comprising a range of tokens assigned to a physical node or shard. Each vNode is replicated across the physical nodes a number of times determined by the replication factor (RF) set for the keyspace. |
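
The ring and vNode entries above can be sketched as a small consistent-hashing example. Everything here is an assumption made for illustration: the `Ring` class, the `token_for` helper, the use of MD5 as the hash (chosen only because it ships with Python), and the number of vNodes per node. It shows the general technique of walking a token ring to pick RF distinct replicas, not ScyllaDB's actual partitioner or replication strategy.

```python
import hashlib
from bisect import bisect_left


def token_for(key: str) -> int:
    """Hash a key to a signed 64-bit token (MD5 is a stand-in hash)."""
    digest = hashlib.md5(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big", signed=True)


class Ring:
    def __init__(self, nodes, vnodes_per_node=8):
        # Each physical node owns several token positions (vNodes) on the ring.
        entries = sorted(
            (token_for(f"{node}#{i}"), node)
            for node in nodes
            for i in range(vnodes_per_node)
        )
        self._tokens = [token for token, _ in entries]
        self._owners = [node for _, node in entries]
        self._node_count = len(set(nodes))

    def replicas(self, partition_key: str, rf: int):
        """Return `rf` distinct nodes, walking clockwise from the key's token."""
        if not 1 <= rf <= self._node_count:
            raise ValueError("rf must be between 1 and the number of nodes")
        start = bisect_left(self._tokens, token_for(partition_key))
        chosen = []
        for step in range(len(self._tokens)):
            owner = self._owners[(start + step) % len(self._tokens)]
            if owner not in chosen:
                chosen.append(owner)
                if len(chosen) == rf:
                    break
        return chosen


if __name__ == "__main__":
    ring = Ring(["node-a", "node-b", "node-c", "node-d"])
    print(ring.replicas("user:42", rf=3))
```

With RF set to 3, losing any single node still leaves two replicas of every partition reachable.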

| Tablet | As with vNodes, the ring can also be divided into Tablets, each representing a range of tokens assigned to a physical node or shard. Based on the RF set for the keyspace, the Tablets are replicated across physical nodes. The key advantage of Tablets over vNodes is that this replication can happen not just at bootstrap but as needed, without interrupting ongoing database operations. Tablets are all the same size, 5 GB. However, the number of Tablets and the number of nodes they are placed on when replicated can vary, allowing smaller tables to be replicated more efficiently rather than fragmented across all nodes. For a deeper dive into Tablets, see Avi Kivity's blogs. |

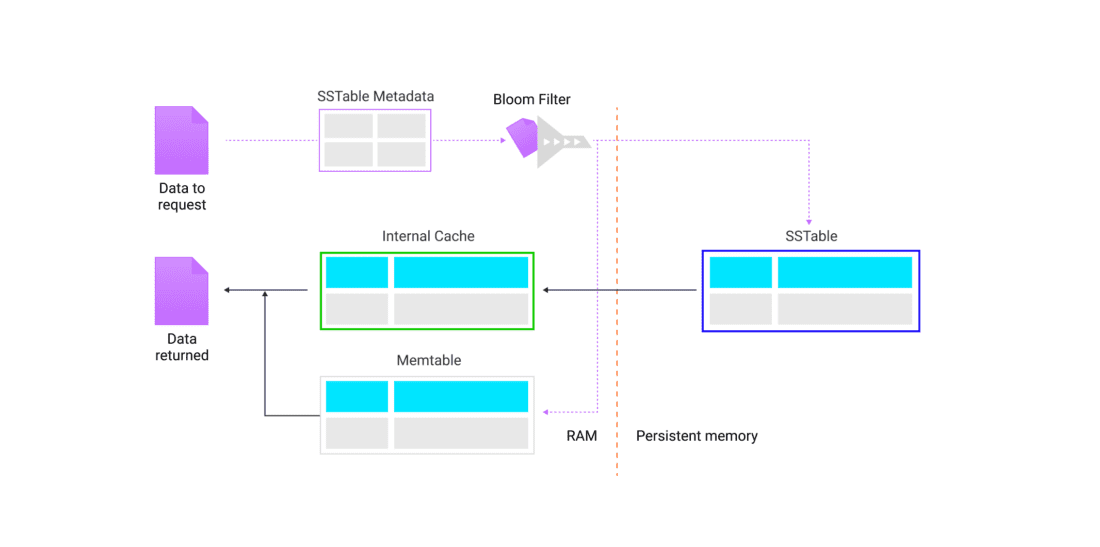

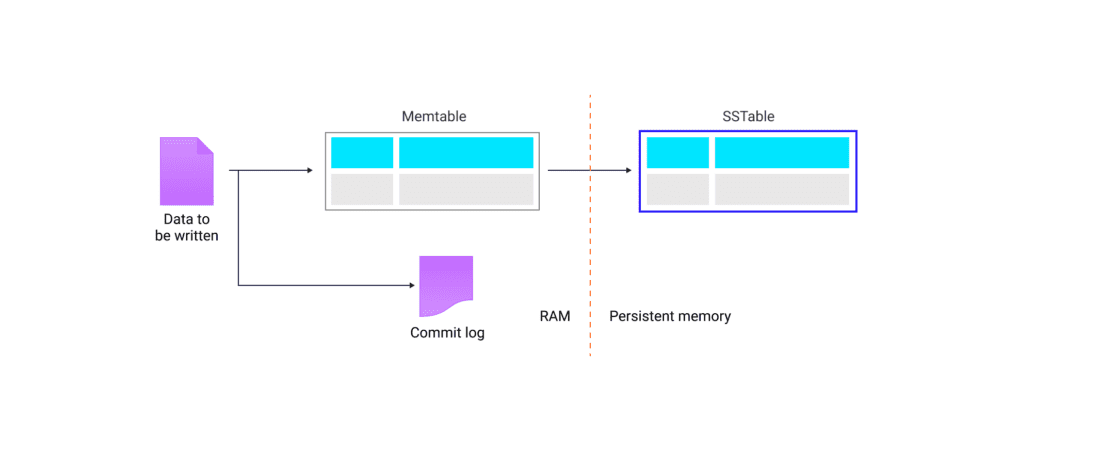

| Memtable | In ScyllaDB’s write path, data is first put into a memtable stored in RAM. Over time, this data is flushed to disk for persistence. On disk, the data in the keyspaces referenced above is stored in SSTables, which are fronted by a built-in, row-oriented cache and by the memtable to accelerate reads and writes. For reads, Bloom filters indicate how likely it is that the requested data is present in a particular storage location. |

| Commitlog | An append-only log of local node operations. Data is written to the commitlog in parallel with being sent to a memtable. This provides persistence (data durability) in case of a node shutdown; when a server restarts, the commitlog can be used to restore a memtable. |

| SSTables | Data is stored persistently in ScyllaDB on a per-shard basis using immutable (unchangeable, read-only) files called Sorted Strings Tables (SSTables), which use a Log-Structured Merge (LSM) tree format. Once data is flushed from a memtable to an SSTable, the memtable (and the associated commitlog segment) can be deleted. Updates to records are not written to the original SSTable, but recorded in a new SSTable. ScyllaDB has mechanisms to know which version of a specific record is the latest. |

| Tombstones | Because SSTables are immutable, deleting a row does not modify the SSTable that holds it. Instead, ScyllaDB writes a marker called a tombstone into a new SSTable. The tombstone serves as a reminder for the database to treat the original data as deleted. |

| Compactions | After a number of SSTables have been written to disk, ScyllaDB runs a compaction, a process that keeps only the latest copy of each record and discards any records marked with tombstones. Once the new compacted SSTable is written, the old, obsolete SSTables are deleted and space is freed up on disk. |
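
The merge step described above can be sketched in a few lines. This is a simplified model under stated assumptions: each SSTable is represented as a dictionary mapping a key to a `(timestamp, value)` pair, `TOMBSTONE` is a hypothetical sentinel, and tombstones are dropped immediately, whereas a real database would normally retain them for a grace period.

```python
TOMBSTONE = object()  # hypothetical marker meaning "this key was deleted"


def compact(sstables):
    """Merge SSTables, keeping the newest version of each key.

    Each input is a dict of key -> (timestamp, value). The result is a new
    dict in sorted key order; the inputs are never modified, mirroring the
    immutability of SSTables.
    """
    latest = {}
    for sstable in sstables:
        for key, (timestamp, value) in sstable.items():
            if key not in latest or timestamp > latest[key][0]:
                latest[key] = (timestamp, value)
    return {
        key: latest[key]
        for key in sorted(latest)
        if latest[key][1] is not TOMBSTONE
    }


if __name__ == "__main__":
    older = {"a": (1, "v1"), "b": (1, "x")}
    newer = {"a": (2, "v2"), "b": (3, TOMBSTONE), "c": (2, "y")}
    print(compact([older, newer]))   # "a" is updated, "b" is gone, "c" is kept
```

The result does not depend on the order in which the input SSTables are visited, because the timestamp alone decides which version wins.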

| Compaction Strategy | ScyllaDB uses different algorithms, called strategies, to determine when and how best to run compactions. The strategy determines the trade-offs between write, read, and space amplification. ScyllaDB Enterprise also supports a unique method called Incremental Compaction Strategy, which can significantly reduce disk space overhead. |

ScyllaDB completely bypasses the Linux page cache during reads, using its own highly efficient row-based cache instead. A recent blog describes the design considerations that prompted this choice. This approach allows for low-latency reads without the added complexity of external caches.

ScyllaDB’s read path fetches data from its in-memory cache when the data is there and, if not, from persistent storage as an asynchronous continuation task using the Seastar framework. Seastar-driven fetches execute in about a microsecond, without blocking or heavyweight context switches. To maintain efficient read performance, ScyllaDB uses Bloom filters to determine whether the requested data is likely to be in a given SSTable on disk, which avoids unnecessary SSTable scans.
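
The read path above can be illustrated with a toy Bloom filter placed in front of each SSTable. The `BloomFilter` class, the `read` function, and the filter parameters are all hypothetical, and SHA-256 is used only because it is in the standard library. The sketch shows the general technique: a filter can say "definitely not here" or "possibly here", so a negative answer lets the read skip that SSTable entirely.

```python
import hashlib


class BloomFilter:
    def __init__(self, size_bits=1024, num_hashes=3):
        self.size_bits = size_bits
        self.num_hashes = num_hashes
        self.bits = 0  # a Python int used as a bit array

    def _positions(self, key: str):
        for i in range(self.num_hashes):
            digest = hashlib.sha256(f"{i}:{key}".encode("utf-8")).digest()
            yield int.from_bytes(digest[:8], "big") % self.size_bits

    def add(self, key: str):
        for pos in self._positions(key):
            self.bits |= 1 << pos

    def might_contain(self, key: str) -> bool:
        # False means definitely absent; True means possibly present.
        return all((self.bits >> pos) & 1 for pos in self._positions(key))


def read(key, cache, sstables):
    """Check the cache first, then only SSTables whose filter allows a hit.

    `sstables` is a list of (BloomFilter, dict) pairs, newest first.
    """
    if key in cache:
        return cache[key]
    for bloom, data in sstables:
        if bloom.might_contain(key) and key in data:
            cache[key] = data[key]   # populate the cache for later reads
            return data[key]
    return None


if __name__ == "__main__":
    bloom = BloomFilter()
    bloom.add("user:42")
    cache = {}
    print(read("user:42", cache, [(bloom, {"user:42": "Ada"})]))
    print(cache)
```

A false positive costs one wasted lookup, but the filter never produces a false negative, so no stored data is missed.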

ScyllaDB’s write path puts data in an in-memory structure called a memtable and also durably writes the update to a persistent commitlog. In time, the contents of the memtable are flushed to a new, immutable persistent file on disk, known as a Sorted Strings Table (SSTable).
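
The write path just described can be modeled as a small in-memory class. The names (`ShardStore`, `flush_threshold`, `recover`) are hypothetical, the commitlog is a Python list standing in for an append-only file, and the flush trigger is a simple row count chosen for illustration. The sketch shows the sequence of steps, not ScyllaDB's implementation.

```python
class ShardStore:
    def __init__(self, flush_threshold=3):
        self.commitlog = []    # stand-in for the durable, append-only log
        self.memtable = {}     # in-memory writes not yet flushed
        self.sstables = []     # immutable, sorted snapshots (tuples of pairs)
        self.flush_threshold = flush_threshold

    def write(self, key, value):
        # Record the update in the commitlog and apply it to the memtable.
        self.commitlog.append((key, value))
        self.memtable[key] = value
        if len(self.memtable) >= self.flush_threshold:
            self.flush()

    def flush(self):
        # Persist the memtable as a new sorted, immutable SSTable. After
        # that, the memtable and its commitlog entries are no longer needed.
        if not self.memtable:
            return
        self.sstables.append(tuple(sorted(self.memtable.items())))
        self.memtable = {}
        self.commitlog.clear()

    def recover(self):
        # After a restart, replay the commitlog to rebuild the memtable.
        self.memtable = dict(self.commitlog)


if __name__ == "__main__":
    store = ShardStore(flush_threshold=2)
    store.write("b", 1)
    store.write("a", 2)        # reaches the threshold and triggers a flush
    print(store.sstables)
```

Updates never touch an existing SSTable: each flush produces a new one, which is why compaction is later needed to merge them.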

| Drivers | ScyllaDB communicates with applications via drivers for its Cassandra-compatible CQL interface, or with SDKs for its DynamoDB API. Multiple drivers or clients can connect to ScyllaDB at the same time for greater overall throughput. Drivers written specifically for ScyllaDB are shard-aware: they communicate directly with the node and shard where the data is stored, providing faster access and better performance. You can see a complete list of drivers available for ScyllaDB here. |