An online sports apparel powerhouse, Fanatics has to power a fleet of always-on scalable ecommerce sites listing wares for hundreds of sports franchises from over 1,000 vendors, and to track the shopping carts of millions of demanding sports fans. Founded in 1995, the privately held company grew dramatically over the past quarter century. It is now valued at $4.5 billion and sells $2.6 billion in merchandise annually.

Fanatics have official licensed merchandising deals with the vast majority of professional sports leagues, from the NFL, MLB, NBA, NHL, NASCAR, and more. Critical to their continued success has been the strong vision of Matt Madrigal, Chief Technology and Product Officer, and his technology team to build a Cloud Commerce tech platform that allows the company to meet ever-changing market conditions. As he told the Wall Street Journal in 2018, “the emotions of sports move just as fast as the action on the field, so we need a platform that reacts in real time.”

Just a sample of the many official sports merchandising websites Fanatics runs for professional and collegiate sports leagues on top of their own fanatics.com shop.

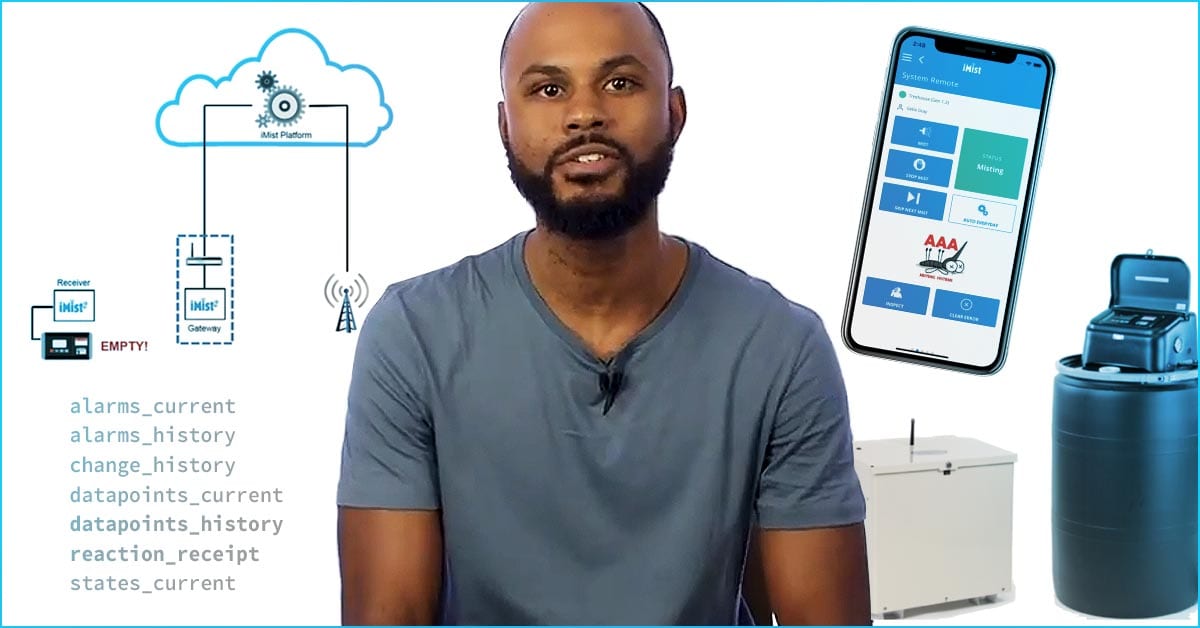

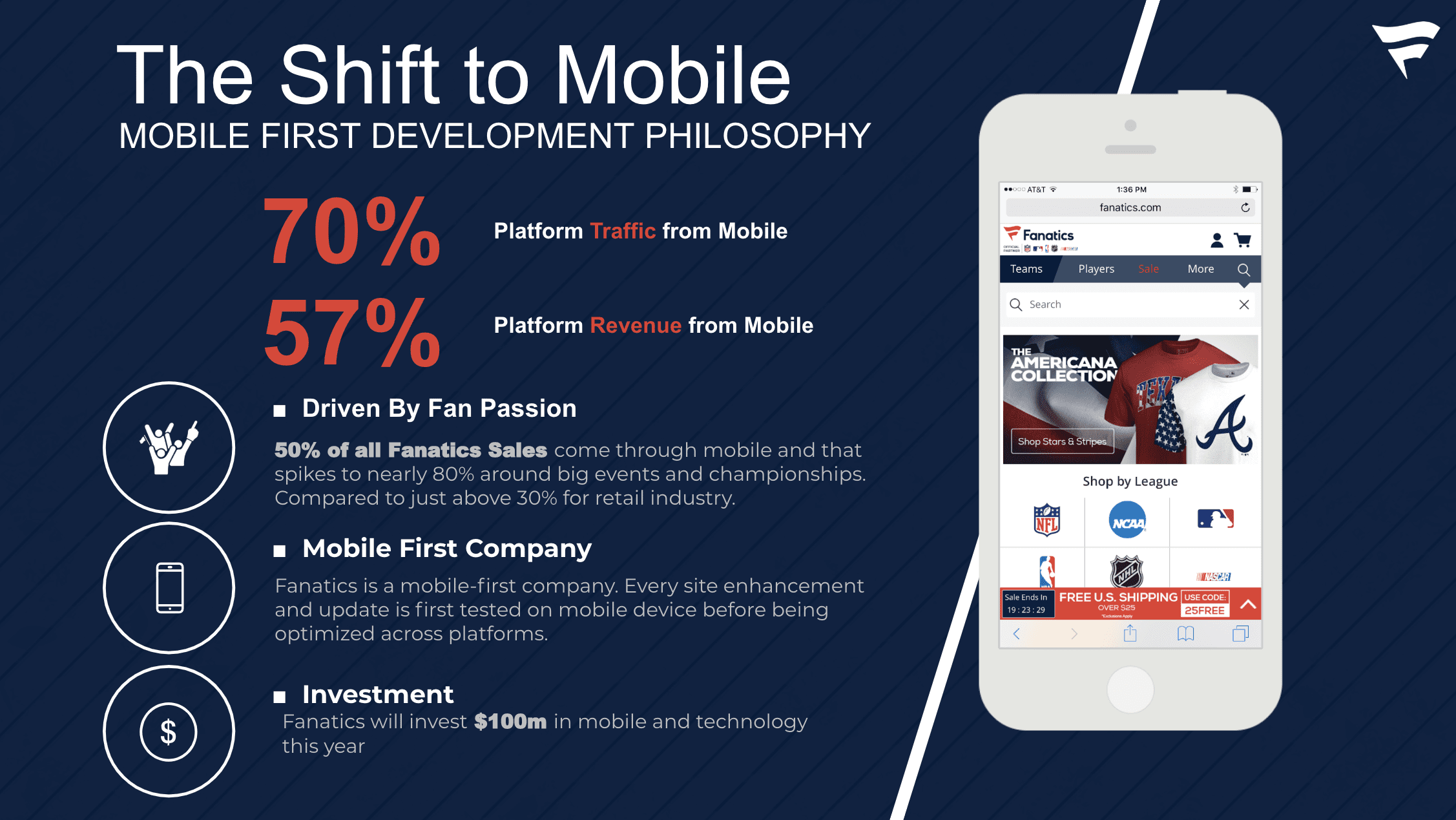

Fanatics has a mobile-first strategy — more than half of normal purchases come in via mobile, a number that skyrockets to 80% during significant “hot market” events (such as the World Series or Super Bowl).

Niraj Konathi, Fanatics’ Director of Platform Engineering, spoke at ScyllaDB Summit 2019 providing the audience a glimpse into their platform. He described how Fanatics uses ScyllaDB for order capture, to handle abandonments and perform data mining. Niraj also shared their “practical engineering” lessons and how Fanatics wrote their own management tool for cluster automation and autoscaling on AWS.

Fanatics sees 57% of its overall platform revenue from mobile. This number rises to almost 80% during hundreds of “hot market” events that occur each year.

2015: The Move to the Cloud, and from MySQL to Cassandra

Fanatics’ journey to the cloud began in 2015 when, to meet their scalability demands, they made the move from their on-premises infrastructure. At the same time they also made the move from MySQL to a new stack powered by a highly scalable NoSQL database — Apache Cassandra.

Cassandra served as the core of the primary store: order capture, order management, shopping carts and order mutations (order changes), accounts and users, loyalty and promo programs, order visibility and even rate limiting.

While this strategy worked for Fanatics, it was not without the typical Cassandra pain points: node sprawl requiring a large cluster size, frequent garbage collection (GC) pauses, CPU spikes during compactions — all of which led to timeouts. Niraj ruefully admitted, “We did not realize how bad it could get.”

For example, with cart mutations (changes to a shopping cart), Niraj painted the picture: “So large wide rows. Think about it. Every time a user comes online they change their carts. They add new items to that cart. And every time we actually stored the cart mutation because of analytics as well as for debugging.” They wanted to know why the user abandoned their cart and left the Fanatics.com site.

Unfortunately the underlying Java Virtual Machine (JVM) was causing frequent GC pauses. Which forced Fanatics to continue to expand multiple times; overprovisioning to try to surmount the problem. Their increased cluster size came with commensurate AWS EC2 costs, greater TCO and long periods of time spent maintaining the cluster.

2019: Migrating from Cassandra to ScyllaDB in Production

The remedy to these pain points was ScyllaDB. “Just moving one use case [cart mutations] to ScyllaDB this year we got a huge benefit out of it.” Out of total cluster size of 55 Cassandra nodes, Fanatics were able to reduce 43 nodes of Cassandra to 6 nodes of ScyllaDB, dramatically reducing their EC2 bill. The workload on the remaining 12 nodes of Cassandra will be moved onto the 6 existing ScyllaDB nodes once Change Data Capture (CDC) reaches General Availability. (Note: CDC was released as an experimental feature in ScyllaDB Open Source 3.2.). This node reduction dramatically reduced their EC2 bill.

Performance was smooth and error-free even in their bursty traffic environment. “We had a hot market last week. During the peak minute we saw close to 180,000 IOPS… and we had zero timeouts.”

This reliable performance made both customers and the Fanatics application teams happier.

Part of the migration also meant retooling administrative tasks. “We have built a lot of tools. Part of my team also controls the pipeline for deploying applications in the cloud. So we have built home-grown tools. We call it Cloud Keeper.” Fanatics have automated in such a way that “with just two commands from Slack… you can bring up an entire ScyllaDB or Cassandra cluster.”

Their automated provisioning follows regular procedures, such as securing deployments with node-to-node and client-to-node encryption. Their data at rest is also encrypted.

Fanatics also use AWS Auto Scaling Groups and ASG Hooks to find out what happened to cause a node to go down or if they want to recover data from the node.

Their ScyllaDB Monitoring Stack is hooked up to PagerDuty, and they use ScyllaDB Manager for repairs.

Elastic Network Interfaces (ENI) are an AWS method to create a logical networking component for a Virtual Private Cluster (VPC); you can attach them to an instance. Fanatics uses these to provision clusters. When a seed node comes up, it is attached to an ENI, which then broadcasts its static IP address. This allows non-seed nodes to join the cluster during bootstrapping. They can also use this same method when a seed node goes down and a new seed node comes up. It will reattach to the same ENI, which maintains the same IP address. This means that they do not need to go to all other non-seed nodes to update IP addresses, thereby smoothing the recovery process.

Fanatics home-grown tools, System Manager and Commando, automate and perform other administrative tasks for ScyllaDB and Cassandra clusters.

Commando is a UI-based tool somewhat akin to DataStax OpsCenter. They created it around the time they moved from Cassandra 2.x to 3.x. Commando can be used to manage stateful and stateless applications. It relies on an underlying RESTful request service, Apache Kafka for a pub-sub mechanism, RDS for persistence and TLS for secure communication. It can manage rolling restarts, centralize logs from VMs based on DNS, and even apply patches to stateful systems. Niraj was hopeful they can release their work as an open source project some time in 2020.

Watch the Full Video

Want to learn more? You can watch the full video of Niraj presenting at our ScyllaDB Summit 2019 below and also see his slides on our Tech Talks page.

Editor’s note: This blog was updated with a clarification to the node reduction between Cassandra and ScyllaDB. The article incorrectly stated the reduction was from 55 Cassandra nodes to 12 ScyllaDB nodes. The actual reduction at present is from 43 Cassandra nodes to 6 ScyllaDB nodes. The remaining 12 nodes of Cassandra will shift their workload onto the existing 6 ScyllaDB nodes once the Change Data Capture (CDC) feature reaches General Availability. At that time, the total node reduction will be from 55 Cassandra nodes to 6 ScyllaDB nodes.