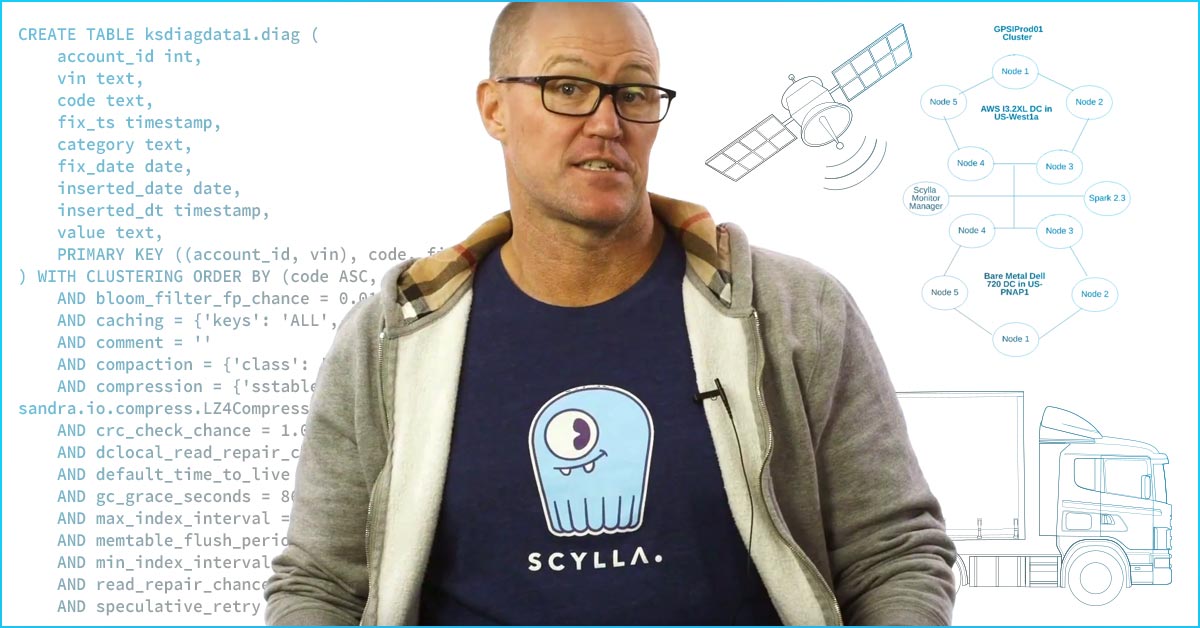

I am an architect at SteelHouse, an Ad Tech platform. Our ad serving platform is distributed across the United States and the EU, and we strive to deliver the best and highest-performing real-time digital advertising possible.

Our ability to serve ads and log web events, to consume metadata and make decisions on it, is what drives the whole platform. There’s no point in serving an ad if we don’t analyze all the data up front to ensure we’re serving an appropriate ad on behalf of our customers. It’s also vital we deliver an experience that’s relevant to a consumer’s interests and increases their engagement.

Our stack is built on NoSQL databases, with Kafka handling all of our messaging. Our services run in the public cloud, with Kubernetes managing our application containers. The biggest driver of SteelHouse’s data requirements is what we call our pixel server, which handles billions of requests a month. The system is complex and extremely sensitive to latency.

We used Cassandra for seven years as the backend data store for our globally distributed systems. Our Cassandra clusters habitually generated reams of timeouts, and it wasn’t possible for us to maintain our expected timeout SLA. At that point, we knew it was time to try something else. Things were not looking good with the Cassandra cluster, and if we switched over, they probably couldn’t get any worse!

One of our senior engineers, Dako Bogdanov, who’s well-versed in databases and C++, took a close look at ScyllaDB and said, “Okay. These guys are the real deal.” He spends a lot of time tuning our data warehouse and our Postgres databases, so his endorsement gave the rest of us confidence. When he sees something he likes, it’s easy for everyone else to get on board.

We had gotten used to the extensive and sometimes painful tuning required by Cassandra and the JVM. But once we set up the first ScyllaDB cluster, we realized that ScyllaDB mostly tunes itself. That seemed like a pretty impressive feat: a system that could be installed, tune itself, and be ready for a production workload. With Cassandra, the JVM tweaks and tuning are all manual and quite brittle. We were heartened to know that the engineering team at ScyllaDB had put a lot of effort into making sure their product performs well out of the box.

Once we saw how easy ScyllaDB is to install, how easy it is to tune, and how great the performance is, we consolidated a few workloads from Cassandra. With ScyllaDB, there’s less hardware to manage. That means fewer things can go wrong. With fewer boxes that might fail, our lives suddenly became much simpler.

ScyllaDB’s performance was as good as advertised. As soon as we deployed ScyllaDB, we saw a great improvement in terms of fewer timeouts being logged. The responsiveness and performance of applications improved as well. Once we switched from Cassandra over to ScyllaDB, timeouts for those very sensitive systems simply disappeared. It was like night and day.

The switchover was a little intense and we were all nervous, but it ultimately worked out really well. Over the next few months, we started migrating some of the remaining clusters to ScyllaDB. Black Friday/Cyber Monday is SteelHouse’s peak season, driving three to four times our normal volume. ScyllaDB provided consistent, reliable performance throughout the entire holiday season.

People on the business side commented that it was our smoothest holiday season on record. It also turned out to be our largest holiday season ever. From a business perspective, we performed better than we ever had before; from a technical perspective, our systems were more stable under more load than ever before. That is a true testament to ScyllaDB.

Today, ScyllaDB handles the billions of requests we serve every month, through both reads and writes. For every request that comes to our pixel server, there are multiple reads and writes. ScyllaDB easily handles the nine billion or so requests that might be interacting with our backend at any given time.

Our team now saves the three to four hours a week it used to spend on Cassandra maintenance. Counting both scheduled and unscheduled work, we’re getting back a roughly 20% improvement in team productivity.

We knew we were taking a risk when we migrated from Cassandra to ScyllaDB in production. But Cassandra pushed us to the edge, and our faith in ScyllaDB turned out to be well-founded. Production migrations aren’t for everyone, so I do recommend that you look into migrating over to ScyllaDB while you still have some time, margin for error, and a safety net!