Counters: a special type of column

Counters are a special type of column that allows its value to only be incremented, decremented, read or deleted. Updates to counters are atomic, which makes them a perfect solution for counting—something that is otherwise difficult to do efficiently.

For example:

> CREATE TABLE cf (pk PRIMARY KEY, my_counter counter);

> UPDATE cf SET my_counter = my_counter + 6 WHERE pk = 0

> SELECT * FROM cf;

pk | my_counter

---+-------------

0 | 6

(1 rows)

> UPDATE cf SET my_counter = my_counter - 1 WHERE pk = 0

> SELECT * FROM cf;

pk | my_counter

---+-------------

0 | 5

(1 rows)As implemented in ScyllaDB, counters are compatible with Cassandra 2.1 or later and the sstable format is exactly the same.

It is worth noting, however, that counters have some important limitations not present in other column types:

- once deleted, counter column value cannot be used again—this is a consequence of the fact that counters can only be incremented or decremented, they cannot be set to any specific value

- a table cannot contain both counter and regular columns—without this limitation it wouldn’t be possible to provide proper atomicity and isolation guarantees for updates that modify both counters and regular columns in a single row

- counter columns cannot be members of primary key

- updates are not idempotent – in case of a write failure the client cannot safely retry the request

- counter columns cannot have time-to-live set

Implementation

ScyllaDB implements counters using state-based conflict-free replicated data types (CRDT), which allow atomic operations, like increment or decrement, to be performed locally without the need for synchronization between nodes. The consequence of using the state-based variant of CRDTs is that at a certain point the write path, counter updates become idempotent. Just like modifications of other column types, they can be applied more than one time, without corrupting the counter value. While it doesn’t change the fact that the client cannot safely retry counter updates, most of the database logic, except for the initial steps of processing counter modifications, doesn’t need to have any additional special cases.

Internal representation

Even though a counter column, when queried, appears to be just a 64-bit signed integer, it is represented by a more complex data structure. Each counter value is a set of counter shards. Counter shard is a triple containing:

- node id – a unique identifier of the node owning this shard

- logical clock – which is incremented each time the owning node modifies the shard value

- current value – the sum of all increments and decrements performed by the owning node

Counter Write

It is important to explain what it means exactly for a node to own a counter shard. During each write operation one of the replicas is chosen as a leader. The leader reads its counter shard, increments logical clock, updates current value and then sends the new version of its shard to the other replicas. In other words, during any counter write the update is applied only to a single counter shard and each counter shard is modified always by the same replica thus avoiding the need for much more complex and expensive consensus protocols such as Paxos. However, because the leader needs to read, modify and write a counter shard it needs to ensure that there is no race with any other concurrent counter update. That requires locking, which is done locally and with cell granularity which means that the impact on the maximum concurrency is minimal.

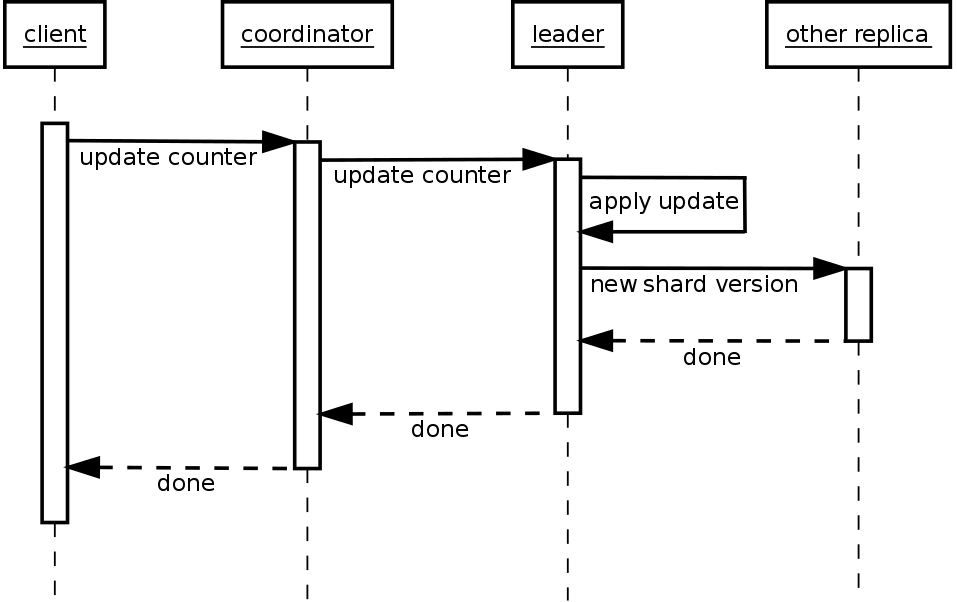

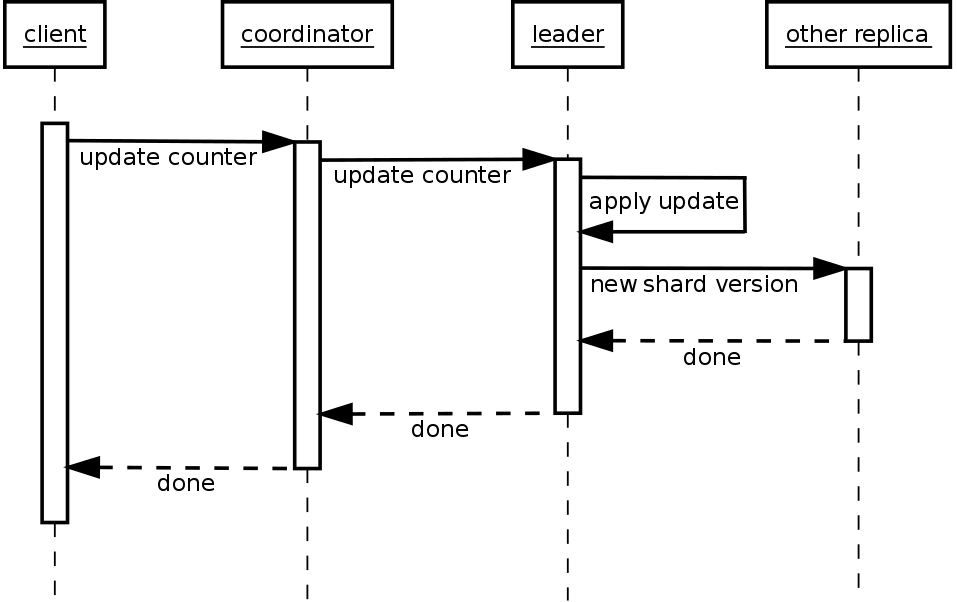

The diagram below shows the steps performed by the cluster during counter write.

Counter updates start when the client sends an update request to the coordinator. At this point, the update is represented as a delta.

- The coordinator chooses a leader for the counter update and forwards to it the request. The leader is always one of the replicas owning the partition that the counter cell belongs to, preferably the one in the same data center as the coordinator.

- Now, the leader needs to transform the received counter delta to a counter shard so that it becomes idempotent. To do this, the leader reads the value of the counter shard, and a counter shard with that value is modified by the delta that is created. This read-modify-write is done under local lock.

- The leader sends the new version of its counter shards to other nodes for replication. At this stage, replication is already very similar to the modifications of other column types, that includes how consistency levels work. The only difference is that one replica (the leader) already has successfully applied the update.

- Each node keeps both its own counter shard as well as shards of all other replicas so that all information needed to perform a read is kept locally.

Reads

Reading a counter column value is not much different than reading a value of any other column except for the deserialization step. During the deserialization step, the values of all counter shards in the set are summed together into a single 64-bit signed integer that is sent back to the client. As mentioned before, nodes also keep counter shards of other replicas and have full information of the state of the counter. Obviously, standard limitations of eventual consistency still apply, there may be a counter update executed with another node as a leader that hasn’t yet reached the node at the time it performs read query.

Conclusion

Counters are undoubtedly a very important feature. ScyllaDB 1.7 provides experimental support for them (with some known inefficiencies) while a full and much more efficient implementation is expected in release 1.8. When that happens, counting things will no longer be a difficult problem.

Stay tuned for a follow-up blog post in which performance of the ScyllaDB’s counter implementation will be explored.