Learn how to navigate some of the sneakiest aspects of DynamoDB pricing

DynamoDB’s pricing model has some head-scratching quirks that can quietly inflate bills by tens to hundreds of thousands of dollars per year. Most of these aren’t malicious; they’re just design decisions from 2012 that made sense at the time but have become increasingly absurd at scale.

This post walks through four of the most egregious examples and the real cost impact on teams running large workloads.

Cost per item size is punitive

DynamoDB charges you for writes per 1KB chunk and reads per 4KB chunk. This means:

- 1KB write = 1 WCU

- 1.1KB write = 2 WCUs (you’re charged for 2KB, but only used 1.1KB)

- 1.5KB write = 2 WCUs

- 2.1KB write = 3 WCUs

Every byte over a threshold costs a full extra WCU, and crossing the first 1KB boundary doubles the cost of that write. It’s a tax on items that don’t fit neatly into the billing boundary. And almost nothing fits neatly: JSON payloads with nested objects, variable-length strings, metadata, timestamps… Most real-world items land just past one of those boundaries, so you routinely pay for capacity you never use.
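The rounding rule above can be sketched in a few lines. This is a minimal illustration, not AWS's billing code: the function names are hypothetical, 1KB is assumed to mean 1,024 bytes, and only standard writes and strongly consistent reads are modeled.

```python
KB = 1024  # assumption: one billing kilobyte is 1,024 bytes


def write_units(item_bytes: int) -> int:
    """WCUs for one standard write: size rounded up to the next 1KB, minimum 1."""
    return max(1, -(-item_bytes // KB))  # -(-a // b) is integer ceiling division


def read_units(item_bytes: int) -> int:
    """RCUs for one strongly consistent read: size rounded up to the next 4KB, minimum 1."""
    return max(1, -(-item_bytes // (4 * KB)))


# Reproduce the list above: 1KB, 1.1KB, 1.5KB and 2.1KB writes.
for size_kb in (1.0, 1.1, 1.5, 2.1):
    size_bytes = round(size_kb * KB)
    print(f"{size_kb}KB write -> {write_units(size_bytes)} WCU")
```

Working in whole bytes keeps the rounding exact; a single byte past a boundary is enough to add a unit.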

Consider a team logging 100M events per day, averaging 1.2KB each. That’s 100M writes carrying ~120M KB of data, almost all billed at the 2KB threshold. They’re paying for 200M KB instead of 120M KB. That’s a 67% surcharge baked into every bill. If those writes would cost $10,000/month when billed by the byte, the rounding alone adds ~$6,700/month in wasted capacity.
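The arithmetic behind this example is easy to check. The sketch below makes one simplifying assumption that the example does not state: every event is exactly the average size. A real workload has a size distribution, and the overhead depends on where items fall relative to each 1KB boundary.

```python
import math

events_per_day = 100_000_000
avg_item_kb = 1.2  # assumption: every item is exactly the average size

billed_kb_per_item = math.ceil(avg_item_kb)       # rounds up to 2KB
actual_kb = events_per_day * avg_item_kb          # ~120M KB actually written
billed_kb = events_per_day * billed_kb_per_item   # 200M KB billed

# The same rounding, expressed two ways.
surcharge_over_actual = billed_kb / actual_kb - 1  # extra cost relative to byte-accurate billing
unused_share_of_bill = 1 - actual_kb / billed_kb   # fraction of the write bill that buys nothing

print(f"surcharge over actual data: {surcharge_over_actual:.0%}")
print(f"unused share of write bill: {unused_share_of_bill:.0%}")
```

The two ratios answer different questions: the surcharge is measured against what the data itself would cost, while the unused share is measured against the bill that actually arrives.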

On demand comes at a premium

On-demand pricing was introduced as a convenience layer for unpredictable workloads. It saves teams the pain of provisioning and forecasting (“just pay for what you use”). The trade-off is that pricing is steep.

Even after AWS’s recent price cut (it used to be ~15x!), on-demand is 7.5x more expensive than provisioned capacity. For a team that starts on on-demand and never switches, the cost difference is catastrophic.

For example, say a SaaS company launches a new product on DynamoDB; they start with on-demand for convenience and quickly scale to 20K reads/sec and 20K writes/sec. On-demand now costs $39K/month. Switching to provisioned would drop that down to $11K/month. And teams often don’t switch because ‘it works’ or ‘the bill surprise hasn’t happened yet.’ The convenience tax on DynamoDB is insane.
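To see where figures like these come from, here is a rough model of the two billing modes. The per-unit rates are assumptions (list prices as we understand them for a US region at the time of writing; check the current price sheet for yours), and the model assumes flat traffic, items of at most 1KB per write and 4KB per strongly consistent read, and provisioned capacity sized exactly to the load.

```python
HOURS_PER_MONTH = 730

# Assumed list prices in USD; verify against current regional pricing.
ON_DEMAND_PER_MILLION_WRITES = 0.625
ON_DEMAND_PER_MILLION_READS = 0.125
PROVISIONED_PER_WCU_HOUR = 0.00065
PROVISIONED_PER_RCU_HOUR = 0.00013


def on_demand_monthly(reads_per_sec: float, writes_per_sec: float) -> float:
    """Monthly on-demand cost: pay per request actually made."""
    seconds = HOURS_PER_MONTH * 3600
    reads_millions = reads_per_sec * seconds / 1e6
    writes_millions = writes_per_sec * seconds / 1e6
    return (reads_millions * ON_DEMAND_PER_MILLION_READS
            + writes_millions * ON_DEMAND_PER_MILLION_WRITES)


def provisioned_monthly(rcu: float, wcu: float) -> float:
    """Monthly provisioned cost: pay per capacity unit per hour, used or not."""
    return HOURS_PER_MONTH * (rcu * PROVISIONED_PER_RCU_HOUR
                              + wcu * PROVISIONED_PER_WCU_HOUR)


on_demand = on_demand_monthly(20_000, 20_000)
provisioned = provisioned_monthly(20_000, 20_000)
print(f"on-demand:   ${on_demand:,.0f}/month")
print(f"provisioned: ${provisioned:,.0f}/month")
```

Under these assumptions the model lands close to the figures quoted above. In practice provisioned tables need headroom above average load, so the real gap depends on how spiky the traffic is.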

Even if you wanted to retain that flexibility, ScyllaDB would cost $3K/month for on-demand or just $1K/month with a hybrid subscription + flex component.

Multi-region network costs are deceptive

Global Tables already charge replicated writes (rWCUs) at a premium. But there’s a second hidden cost too: data transfer. AWS charges for cross-region data transfer at standard EC2 rates: $0.02/GB to adjacent regions, up to $0.09/GB to distant regions. As a result, Global Tables end up costing 2-3x more than expected.

These hidden network costs often don’t appear as a line item on your DynamoDB bill. They’re rolled up into ‘Data Transfer’ charges, so many teams don’t notice them or attribute them correctly.
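A back-of-the-envelope estimate helps put a number on that line item. The sketch below uses the per-GB rates quoted above; the workload (20K writes/sec of 1,200-byte items), the helper name, and the assumption that each replicated write transfers exactly the item's size are all hypothetical simplifications.

```python
SECONDS_PER_MONTH = 730 * 3600
BYTES_PER_GB = 1e9  # assumption: decimal gigabytes


def monthly_transfer_cost(writes_per_sec: float, item_bytes: int,
                          rate_per_gb: float, replica_regions: int = 1) -> float:
    """Estimated monthly cross-region transfer cost for replicated writes."""
    gb_per_month = writes_per_sec * item_bytes * SECONDS_PER_MONTH / BYTES_PER_GB
    return gb_per_month * rate_per_gb * replica_regions


# Hypothetical workload replicated to one other region.
adjacent = monthly_transfer_cost(20_000, 1_200, rate_per_gb=0.02)
distant = monthly_transfer_cost(20_000, 1_200, rate_per_gb=0.09)
print(f"adjacent region: ${adjacent:,.2f}/month")
print(f"distant region:  ${distant:,.2f}/month")
```

The cost scales linearly with the number of replica regions, which is why it is worth estimating before adding a third or fourth region.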

ScyllaDB can’t escape the variable costs of cross-region data transfer that AWS enforces. However, we have a number of cost reduction mechanisms that assist with these costs. ScyllaDB handles multi-DC replication natively. You provision nodes in each data center, and replication is built into the protocol. There are also shard-aware and rack-aware drivers, which help minimize network overhead. Add network compression, and your cross-region data costs get even lower.

Reserved capacity requires you to predict capacity

Reserved capacity offers massive discounts, up to 70% off. But there’s a catch: you must commit for 1 or 3 years upfront, and you must predict your read and write throughput independently.

This is absurdly difficult. Your workload changes: new features launch, old features get deprecated, customer behavior shifts, and traffic patterns evolve. Predicting the exact read/write ratio years out is impossible. Teams either over-commit (wasting money on unused capacity) or under-commit (paying on-demand rates for the overage).

Example: You commit to 200K reads/sec and 500K writes/sec for 1 year. On DynamoDB, that is going to cost $1.4M/year for the upfront and annual commitment. But six months into the year, growth exceeds your capacity estimates and your application starts having requests throttled. You revert to autoscaling a mixture of reserved plus on-demand. Now, you’re paying the 7.5x markup – and that costly misjudgment is locked in for the remainder of the year.
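A commitment of this size can be approximated as an upfront fee plus an hourly charge per block of 100 capacity units. The rates below are assumptions (1-year reserved-capacity list prices as we understand them for a US region), and the function name is hypothetical; treat the output as a rough estimate rather than a quote.

```python
HOURS_PER_YEAR = 8760

# Assumed 1-year reserved-capacity rates in USD, per block of 100 units.
WCU_UPFRONT, WCU_HOURLY = 150.0, 0.0128
RCU_UPFRONT, RCU_HOURLY = 30.0, 0.0025


def reserved_yearly(rcu: int, wcu: int) -> float:
    """First-year cost of a reserved commitment: upfront fee plus hourly charges."""
    write_cost = (wcu / 100) * (WCU_UPFRONT + WCU_HOURLY * HOURS_PER_YEAR)
    read_cost = (rcu / 100) * (RCU_UPFRONT + RCU_HOURLY * HOURS_PER_YEAR)
    return read_cost + write_cost


total = reserved_yearly(rcu=200_000, wcu=500_000)
print(f"1-year reserved commitment: ${total:,.0f}")
```

Note that reads and writes are priced and committed separately, so a shift in the read/write ratio can leave one side over-committed while the other overflows into more expensive capacity.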

The solution? Over-commit to hedge your bets. This guarantees you’re wasting money on overprovisioning, just to avoid even higher on-demand charges. It’s a no-win scenario.

Compare this to ScyllaDB with a hybrid subscription + flex component that automatically scales to your requirements throughout the year, which might cost $133K/year to start with. Radically less expensive and more flexible (on both compute and storage requirements) thanks to true elastic scaling with X Cloud.

Why does this matter?

These four pricing quirks aren’t hypothetical. Combined, they add anywhere from tens of thousands of dollars to six figures per year to bills across the industry. They’re especially brutal for write-heavy workloads, multi-region systems, and large items. And because they’re partially hidden (buried in separate line items, masked by the per-operation model, or justified by architectural constraints), teams often don’t realize how much they’re paying.

Some of this is inevitable with a fully managed service. But databases built on different cost models can deliver the same durability, consistency, flexibility and scale at a fraction of the price. For example, this is the case with ScyllaDB, which charges by the node and includes replication and large items at no extra cost.

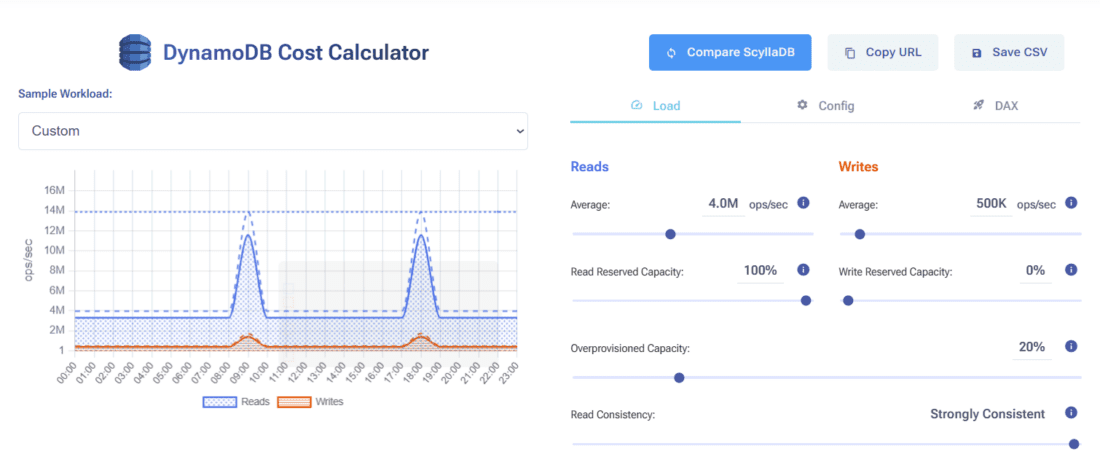

Curious what your workload actually costs? Use the ScyllaDB DynamoDB Cost Calculator at calculator.scylladb.com to model your real costs, including all the hidden charges, and see how ScyllaDB pricing stacks up.