How we used AI-assisted invariant-based testing to locate and resolve tricky hidden bugs with complex state transitions

Seastar is a high-performance C++ framework for writing asynchronous server applications. It powers projects like ScyllaDB and Redpanda. One of its core rules is simple but strict: no blocking allowed. Every operation that could take time (e.g., reading from disk, writing to a socket, waiting for a lock) must be expressed asynchronously by returning a future that resolves when the work is completed.

This makes Seastar applications extremely efficient on modern hardware. However, it also means that even seemingly mundane things, like writing data to a stream, require careful thought about ownership, lifetimes, and buffering. Moreover Seastar’s output stream has always experienced a limitation: the inability to freely mix small, buffered writes with large, zero-copy chunks. It was something that developers avoided and tolerated – but we always considered it something worth improving … someday.

Fixing this requires a deep dive into complex state transitions, which inherently creates a high risk for introducing sequencing bugs. A standard coding approach won’t work; the task requires a way to trace the system’s state across millions of test cases. This post describes the process of using AI-assisted invariant-based testing to try to locate and resolve these tricky hidden bugs. TL;DR What could have been an extremely complicated fix ultimately was actually surprisingly smooth and effective.

Output streams

Output stream is Seastar’s output byte flow abstraction. It’s used wherever data needs to go out of an application. For example, it’s used for disk files, network connections, and stackable virtual streams that transform data on the fly (such as compression or encryption layers sitting on top of another stream). Whatever the underlying sink is, the output stream presents a uniform interface to the caller.

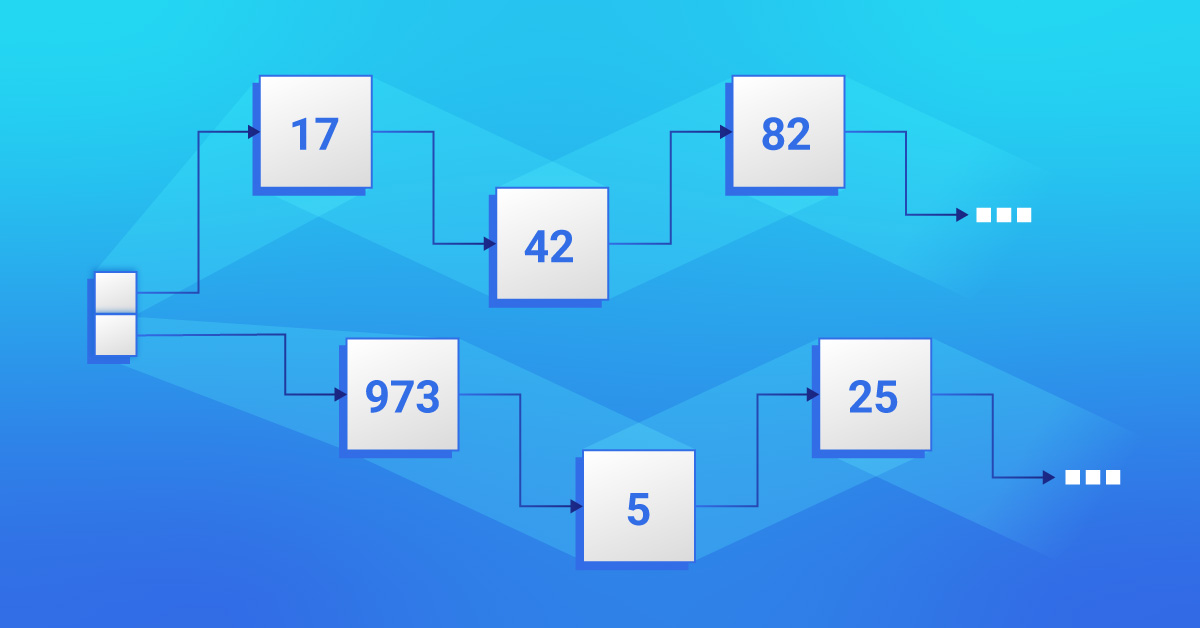

It gives callers two ways to push data through:

- Buffered writes: Copy bytes into an

internal buffer; flush when the buffer fills up or when explicitly requested. - Zero-copy writes: Hand over memory buffers directly; the stream passes it to the sink without copying a single byte of the buffer data.

Zero-copy is important for large blobs since we want to avoid copying megabytes of data. Buffered writes are important for building up small pieces efficiently. In a real application, it’s natural to interleave both: write a small header into the buffer, then attach a large payload as a zero-copy buffer, then write a small trailer.

There is also a trim_to_size stream option. When enabled, the stream guarantees that no chunk delivered to the underlying sink exceeds the configured stream buffer size. This matters for sinks that have an upper limit on how much data they can accept in a single call – certain network APIs, for instance, or aligned disk I/O. Without it, a larger buffer can pass through as-is.

The Problem

Until recently, mixing the two write modes was not supported. Internally, buffered and zero-copy writes used two different storages: internal buffer for the former data, and dedicated container for the latter. There was no clean way to append buffered bytes onto the tail of pending zero-copy data while preserving ordering. The code simply asserted that the zero-copy container was empty whenever a buffered write arrived and vice-versa. The nearby code comment, however, stated that mixing writes was not supported yet – so the intention to fix it had always been there. The goal of the work described here was to make it happen.

Start with the Tests

We figured we should build a solid test foundation before touching the implementation. We had some pre-existing tests for output streams, but they were really just a collection of ad-hoc cases (specific input sequences with hardcoded expected outputs). This was fine for catching regressions but not great for systematically exploring the large space of possible inputs against drastic code changes.

The new approach was invariant-based testing. Rather than checking exact output sequences, the tests need to verify that certain properties always hold, regardless of input. Specifically, we wanted to check that:

- All written bytes arrive at the sink, in order, with no corruption.

- Every chunk delivered to the sink (except the last) must be at least

stream_sizebytes with no undersized non-last chunks. - With the trimming option enabled, all outgoing chunks must be exactly

stream_sizebytes.

With these invariants defined, the test iterates over all combinations of chunk sizes (1 byte through 3x times the stream_size bytes) and all assignments of write type (buffered or zero-copy) to each chunk. For n chunks ,that’s 2^n type patterns plus trimming option giving about 1.6 million combinations in total. The ad-hoc tests were then removed – the invariant test subsumed them.

One practical issue: 1.6 million cases ran fast in a regular build (~5 seconds), but under sanitizers (ASan, UBSan) it ballooned to over two minutes. Given the whole seastar test suite runs for several minutes, this new timing had to be improved somehow. The fix was to turn an exhaustive test into a fuzzy one: in debug builds, shuffle all 2^n masks, always keep the all-buffered and all-zero-copy patterns, and sample ~10% of the rest. That brought sanitizer runs down to less than twenty seconds.

Implementing the Fix

With tests in place, the implementation work began. The key challenge was making the internal buffer and zero-copy container interoperate cleanly. Two transitions required handling:

- Buffered → zero-copy

- Zero-copy → buffered

Buffered → zero-copy

When a zero-copy write arrives and there’s buffered data. That data needs to be folded into the zero-copy container so that ordering is preserved. The naive approach – trim buffer to its filled length and move it into container – works, but it wastes the rest of the buffer allocation. Instead, the filled buffer prefix is shared into the container as a view or sub-span, and the buffer itself is advanced past it, thus sharing the underlying memory. This way, the tail of the original allocation is still available for future buffered writes after the zero-copy sequence. No reallocation is needed on the mode switch.

This tail – trimmer buffer, pointing at unused capacity within the original allocation – is what we call the remnant. It is a new concept introduced by this change. Before mixed-mode writes were supported, the buffer was always either full (and flushed) or freshly allocated. The remnant is an optimization. But (as will become clear shortly), its existence also introduced several subtle failure modes that took time to track down.

Zero-copy → buffered

When a buffered write arrives and the zero-copy container is non-empty, the new data can just be appended to the internal buffer regularly. A new zero-copy arrival, if it happens, would keep the buffer ordering as described above. The flushing path was also updated to handle the case where buffered and zero-copy data are both available at that time. The buffered tail is folded into the zero-copy vector before forwarding both downstream.

Bugs Found Along the Way

Once the implementation was written, the tests were run. They failed – many times, in different ways. To facilitate debugging, each test failure logged the exact stream configuration and the write sequence that triggered the problem, for example:

stream_size=5 trim_to_size=0

input_chunks=[3, 2]

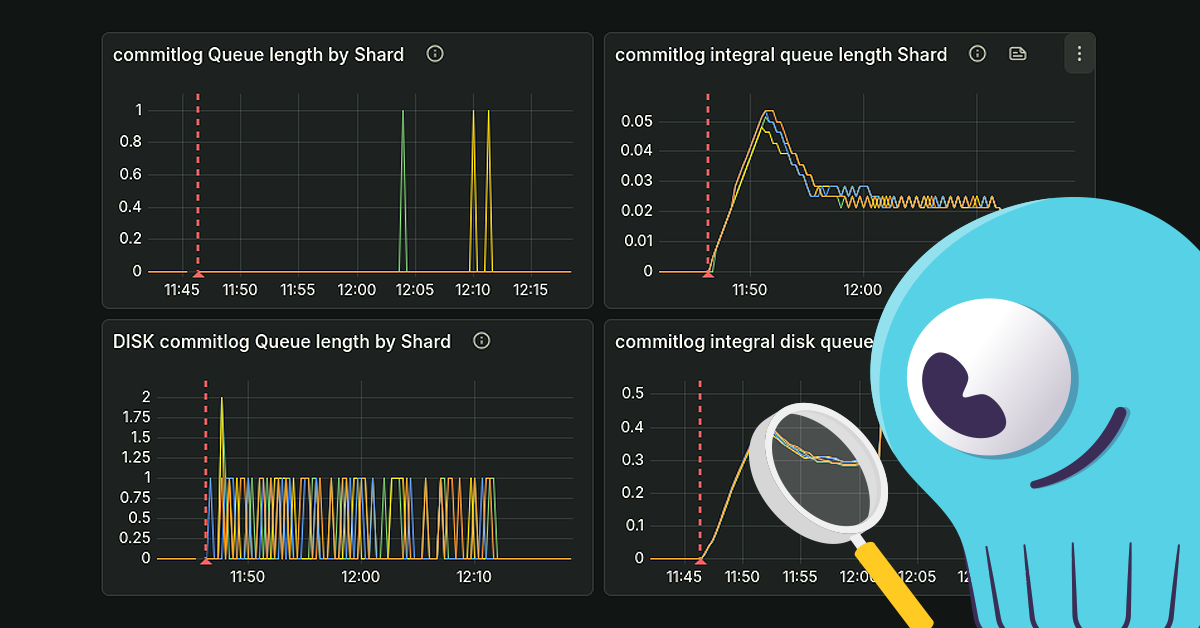

write_types=[buffered, zero_copy]This made the diagnosis unusually direct. Given a specific failing case, we could trace the execution step by step – mentally simulating the stream, tracking the bytes accumulated in the buffer and zero-copy container state at each point, and comparing that against what the state should be at that moment. The stream’s logic is not huge, but it has enough branches and interacting state variables to make it excessively time- and effort-consuming for a human.

So, we turned to AI to hopefully save time and effort. Each time a test failed, instead of walking the input_stream state step-by-step, we prompted the AI with the exact test-case description string, the code itself, and the request to find where the problem could be. The AI then started “thinking” and its mind-flow looked as if it was simulating the input_stream code execution step-by-step, like a developer would do, just much faster.

This turned out to be an incredibly fast way to find where the invariant breaks. In many cases, it could correctly analyze the desired state the stream had to happen in. Sometimes, it even suggested excellent ways to fix the problem that was found. Identifying the root cause is often only half the work; understanding which fix is correct, safe, and doesn’t introduce new assumptions that will break later is the other half. AI found the problems for us and was quite helpful in helping us fix them.

For example, one of the first debugging sessions with AI looked like this…

Developer:

The test fails like that

fatal error: in "test_splitting_invariants": data integrity check failed: stream_size=5 trim_to_size=0 input_chunks=[1, 1, 1, 4] write_types=[zero_copy, buffered, buffered, buffered]Please, analyze

AI:

Let me trace through this case carefully.

stream_size=5, trim_to_size=false, writes: [zc(1), buf(1), buf(1), buf(4)]

After zc(1):

_zc_bufs = [tb(1)], _zc_len = 1, _end = 0_zc_len < _size, nothing flushed

After buf(1):

- Goes to

write(const char*, 1)fast path — but!_buf, so falls toslow_write _end=0, n=1 < _size=5, so skips the!_end && n >= _sizebranch- Allocates

_buf, copies 1 byte,_end = 1

After buf(1):

- Fast path: fits in

_buf, _end = 2

After buf(4):

_end=2, n=4, n > _size - _end = 3, so goes toslow_write_end=2, so skips the!_end && n >= _sizebranchnow = min(4, 5-2) = 3, copies 3 bytes,_end = 5now != n(3!=4), so allocatesnextwithmax(4-3, 5) = 5bytes, copies 1 byte into it_zc_bufsis non-empty, so enters the new branch:- seals

_buf.share(0, 5)into_zc_bufs _zc_len += 5 → _zc_len = 6_zc_len += n - now = 1 → _zc_len = 7- pushes

nextinto_zc_bufs trim_to_size=false →callszero_copy_put(_zc_bufs)

- seals

So zero_copy_put sends [tb(1), tb(5), tb(5)] — but next only has 1 byte of valid data in it, yet it’s a 5-byte allocation! We’re pushing the full _size-sized next buffer but only n - now = 1 bytes were written into it. The buffer isn’t trimmed before being pushed.

The fix: trim next to n - now bytes before pushing it into _zc_bufs when _zc_bufs is non-empty. Shall I go ahead and fix it?

The root-case was obvious and the suggested fix was simple and correct. So, we accepted the suggestion and the debugging session went on. We went through several rounds like that, with each step producing more lengthy analysis and more sophisticated fix proposals.

At some point, a test with a small buffered write followed by a zero-copy write showed data corruption downstream. Tracing the execution revealed that after trimming the internal buffer past the filled region, the trimmed buffer still appeared non-null even though it had zero usable capacity remaining. The fast-path check for buffer availability wasn’t prepared for that and didn’t trigger reallocation on the next write. As a result, bytes were written into unowned memory.

Another case involved a buffered write code branch which handles writes larger than stream_size by chopping them into chunks. After a mode switch, the internal buffer might become a small remnant – smaller than the tail chunk the code in question was trying to store there. Tracing through the code, we saw that it was the place where the capacity check wasn’t prepared to meet with the remnant buffer. It silently assumed that the internal buffer always had room for a full-sized tail. The result was an ASan-detected heap overflow.

A more subtle issue arose around the same remnant buffer in a different scenario. When buffered write chopping code encounters a tail chunk that is smaller than the stream_size, but larger than the remnant's remaining capacity, it has to make a choice. It could either fill the remnant partially and asynchronously put it before allocating a fresh buffer for the rest, or simply abandon the remnant and allocate a fresh full buffer.

The first option is more space-efficient, but would require an async flushing inside what is otherwise a synchronous setup step, significantly complicating the code. The second option wastes the unused bytes of the remnant's allocation – but crucially, it doesn’t leak them. The remnant shares its underlying allocation with the sealed buffer already in the zero-copy container, so the memory is freed once that buffer is flushed and all references to the allocation are dropped. The deliberate trade-off – wasted but not leaked – was worth making, and a comment in the code explains the reasoning for whoever reads it next.

Each bug effectively had the same shape: a subtle assumption about stream state that held in the original single-mode code silently broke in mixed-mode scenarios. The invariant test exposed the bugs by providing a minimal reproducible case and a clear description of which invariant was violated. Plus, it also made each one straightforward to reason about and fix.

The Result

The work touches tests and implementation in roughly equal measure, which feels about right for a change like this. The test suite grew from a handful of hand-crafted cases into an exhaustive invariant-based framework that covers all combinations of chunk sizes and write types – something that would have been impractical to write by hand. On the implementation side, the long-standing restriction on mixed-mode writes is gone. Buffered and zero-copy writes can now be freely interleaved in any order, with the stream handling the transitions internally. This preserves ordering and the chunk-size invariants that sinks depend on.

In general, writing a test that covers as many possible situations as possible and then making sure that the code passes those tests is a very good approach. It makes sure the end code is correct. In rare cases when the test covers all possible situations the code may have to deal with, we can say that “the code is officially bug free.” Making AI facilitate testing turned out to be the best decision made in this work. Given the amount of test cases and the number of possible combinations of input_stream inner states, debugging each failing test case would be a nightmare for the developer.