Why Scheduling at All?

Scheduling requests of any kind always serves one purpose — gain control over priorities of those requests. In the priorities-less system one doesn’t need to schedule; just putting whatever arrives into the queue and waiting when it finishes is enough. When serving IO requests in ScyllaDB we cannot afford to just throw those requests into the disk and wait for them to complete. In ScyllaDB different types of IO flows have different priorities. For example, reading from disk to respond to a user query is likely a ”synchronous” operation in the sense that a client really waits for it to happen, even though the CPU is most likely busy with something else. In this case if there’s some IO running at the time the query request comes in ScyllaDB must do its best to let the query request get served in a timely manner even if this means submitting it into the disk ahead of something else. Generally speaking we can say that OLTP workloads are synchronous in the aforementioned sense and are thus latency-sensitive. This is somewhat opposite to OLAP workloads, which can tolerate higher latency as long as they get sufficient throughput.

Seastar’s IO Scheduler

ScyllaDB implements its IO scheduler as a part of the Seastar framework library. When submitted, an IO request is passed into the seastar IO scheduler, where it finds its place in one of several queues and eventually gets dispatched into the disk.

Well, not exactly into the disk. ScyllaDB does its IO over files that reside on a filesystem. So when we say “request is sent into a disk” we really mean here that the request is sent into the Linux kernel AIO, then it gets into a filesystem, then to the Linux kernel IO-scheduler and only then — to the disk.

The scheduler’s goal is to make sure that requests are served in a timely manner according to the assigned priorities. To maintain the fairness between priorities IO scheduler maintains a set of request queues. When a request arrives the target queue is selected based on the request priority and later, when dispatching, the scheduler uses a virtual-runtime-like algorithm to balance between queues (read — priorities), but this topic is beyond the scope of this blog post.

The critical parameter of the scheduler is called “latency goal.” This is the time period after which the disk is guaranteed to have processed all the requests submitted so far, so the new request, if it arrives, can be dispatched right at once and, in turn, be completed not later than after the “latency goal” time elapses. To make this work the scheduler tries to predict how much data can be put into the disk so that it manages to complete them all within the latency goal. Note that meeting the latency goal does not mean that requests are not queued somewhere after dispatch. In fact, modern disks are so fast that the scheduler does dispatch more requests than the disk can handle without queuing. Still the total execution time (including the time spent in the internal queue) is small enough not to violate the latency goal.

The above prediction is based on the disk model that’s wired into the scheduler’s brains, and the model uses a set of measurable disk characteristics. Modeling the disk is hard and a 100% precise model is impossible, since disks, as we’ve learned, are always very surprising.

The Foundations of IO

Most likely when choosing a disk one would be looking at its 4 parameters — read/write IOPS and read/write throughput (in Gbps). Comparing these numbers to one another is a popular way of claiming one disk is better than the other and in most of the cases real disk behavior meets the user expectations based on these numbers. Applying Little’s Law here makes it clear that the “latency goal” can be achieved at a certain level of concurrency (i.e. — the number of requests put in disk altogether) and all the scheduler needs to do its job is to stop dispatching at some level of in-disk concurrency.

Actually it may happen that the latency goal is violated once even a single request is dispatched. With that the scheduler should stop dispatching before it submits this single request, which in turn means that no IO should ever happen. Fortunately this can be observed only on vintage spinning disks that may impose milliseconds-scale overhead per request. ScyllaDB can work with these disks too, but the user’s latency expectation must be greatly relaxed.

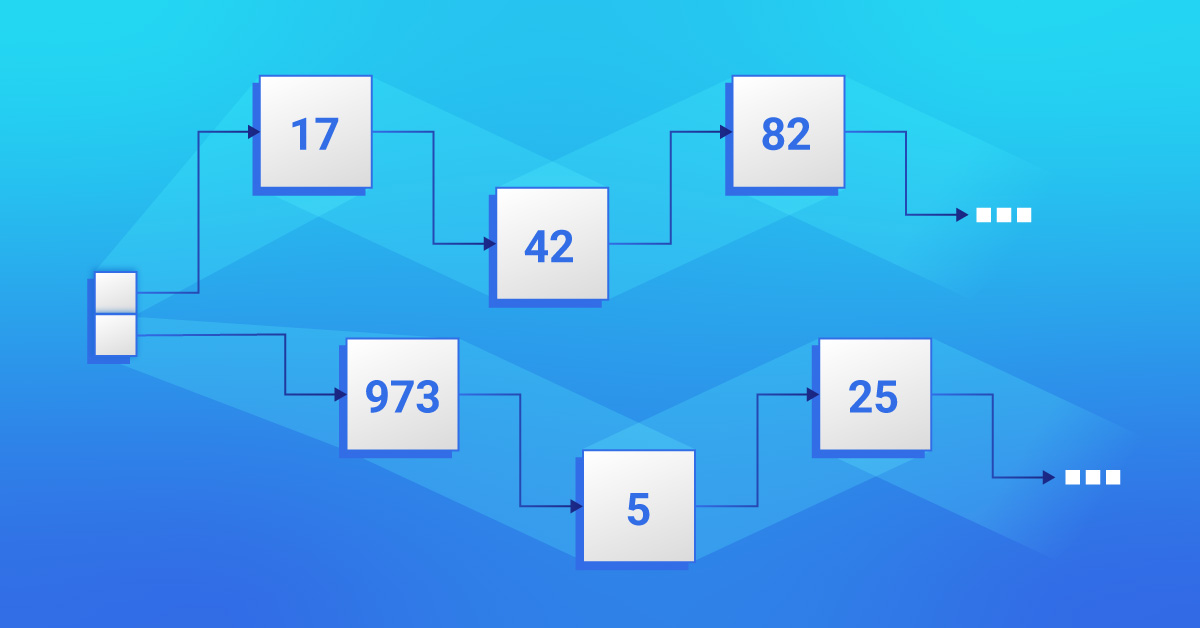

Share Almost Nothing

Let’s get back for a while to the “feed the disk with as many requests as it can process in ‘latency goal’ time” concept and throw some numbers into the game. The latency goal is the value of a millisecond’s magnitude, the default goal is 0.5ms. An average disk doing 1GB/s is capable of processing 500kB during this time frame. Given a system of 20 shards each gets 25kB to dispatch in one tick. This value is in fact quite low. Partially because ScyllaDB would need too many requests to work and thus it would be noticeable overhead, but the main reason is that disks often require much larger requests to work at their maximum bandwidth. For example, the NVMe disks that are used by AWS instances might need 64k requests to get to the peak bandwidth. Using 25k requests will give you ~80% of the bandwidth even if exploiting high concurrency.

This simple math shows that Seastar’s “shared nothing” approach doesn’t work well when it comes to disks, so shards must communicate when dispatching requests. In the old days ScyllaDB came with the concept of IO coordinator shards; later this was changed to the IO-groups.

Why iotune?

When deciding whether or not to dispatch a request, the scheduler always asks itself — if I submit the next request, will it make the in-disk concurrency high enough so that it fails the latency goal contract or not? Answering this question, in turn, depends on the disk model that sits in the scheduler’s brain. This model can be evaluated in two ways — ashore or on the fly (or the combination of these two).

Doing it on the fly is quite challenging. Disk, surprisingly as it can be, is not deterministic and its performance characteristics change while it works. Even such a simple number as “bandwidth” doesn’t have a definite fixed value, even if we apply statistical errors to our measurement. The same disk can show different read speeds depending on if it’s in so-called burst mode or if the load is sustained, if it’s a read or write (or mixed) IO, it’s heavily affected by the disk usage history, air temperature in the server room and tons of other factors. Trying to estimate this model runtime can be extremely difficult.

Contrary to this, ScyllaDB measures disk performance in advance with the help of a tool called iotune. This tool literally measures a bunch of parameters the disk has and saves the result in a file we call “IO properties.” Then the numbers are loaded by Seastar on start and are then fed into the IO scheduler configuration. The scheduler thus has the 4-dimensional “capacity” space at hand and is allowed to operate inside a sub-area in it. The area is defined by 4 limits on each of the axes and the scheduler must make sure it doesn’t leave this area in a mathematical sense when submitting requests. But really these 4 points are not enough. Not only the scheduler needs a more elaborated configuration of the mentioned “safe area,” but also must handle the requests’ lengths carefully.

Pure Workloads

First, let’s see how disks behave if being fed with what we call “pure” loads, i.e. with only reads or only writes. If one divides maximum disk bandwidth on its maximum IOPS rate, the obtained number would be some request size. If heavily loading the disk with requests smaller than that size, the disk will be saturated by IOPS and its bandwidth will be underutilized. If using requests larger than that threshold, the disk will be saturated by bandwidth and its IOPS capacity will be underutilized. But are all “large” requests good enough to utilize the disk’s full bandwidth? Our experiments show that some disks show notably different bandwidth values when using, for example, 64k requests vs using 512k requests (of course, the larger request size is the larger the bandwidth is). So to get the maximum bandwidth from the disk one needs to use larger requests and vice versa — if using smaller requests one would never get the peak bandwidth from the disk even if the IOPS limit would still not be hit. Fortunately, there’s an upper limit on the request size above which the throughput will no longer grow. We call this limit a “saturation length.”

This observation has two consequences. First, the saturation length can be measured by iotune and, if so, it is later advertised by the scheduler as the IO size that subsystems should use if they want to obtain the maximum throughput from the disk. The SSTables management code uses buffers of that length to read and write SSTables.

This advertised request size, however, shouldn’t be too big. It must still be smaller than the largest one with which the disk still meets the latency goal. These two requirements — to be large enough to saturate the bandwidth and small enough to meet the latency goal — may be “out of sync”, i.e. the latter one may be lower than the former. We’ve met such disks, for those the user will need to choose between latency and throughput. Otherwise he will be able to enjoy both (provided other circumstances are favored).

The second consequence is that if the scheduler sees medium-sized requests coming in it must dispatch fewer data than it would if the requests had been larger. This is because effectively disk bandwidth would be below the peak and, respectively, the latency goal requirement won’t be met. Newer Seastar models this behavior with the help of a staircase function which seems to be both — good approximation and not too many configuration parameters to maintain.

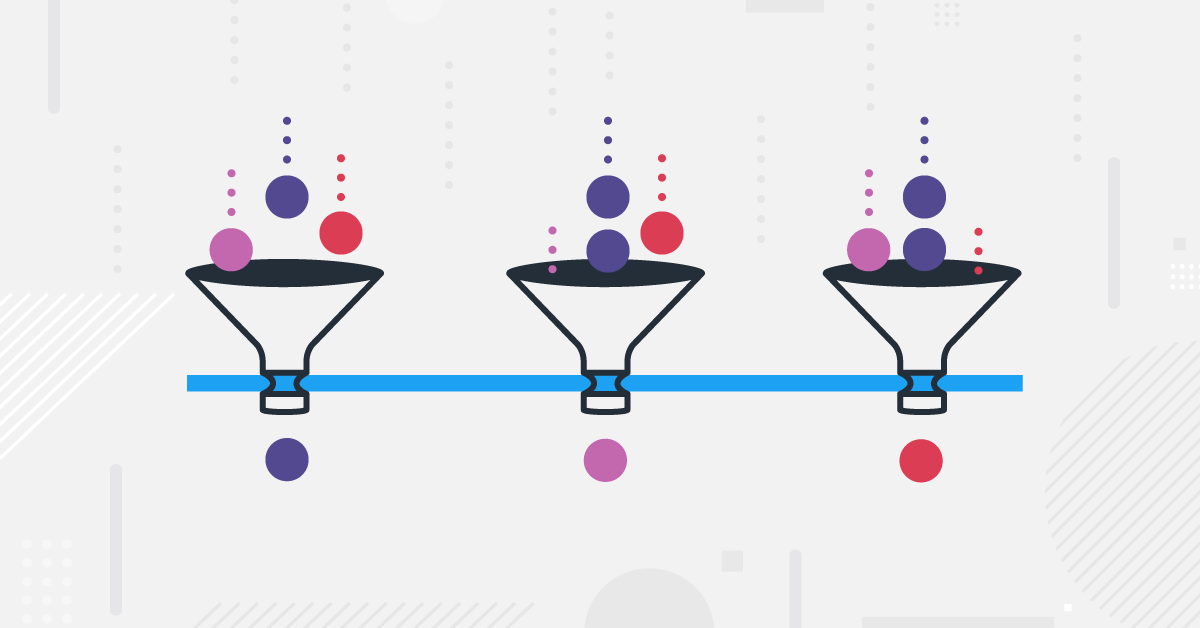

Mixed Workloads

The next dimension of complexity comes with what we call “mixed workloads.” This is when the disk has to execute both reads and writes at the same time. In this case both the total throughput and the IOPS will be different from what one would get if we calculated a linear ratio between the inputs. This difference is two-fold.

First, read flows and write flows get smaller in a different manner. Let’s take a disk that can run 1GB/s of reads or 500MB/s of writes. It’s no surprise that disks write slower than they read. Now let’s try to saturate the disk with two equal unbounded read and write flows. What output bandwidth would we see? The linear ratio makes us think that each flow would get its half, i.e. reads would show 500MB/s and writes would get 250MB/s. In reality the result will differ between disk models and the common case seems to be that writes would always feel much better than reads. For example we may see an equal rate of 200MB/s for both flows, which is 80% for write and only 40% for read. Or, in the worst (or maybe the best) case, writes can continue working at peak bandwidth while reads would have to be content with the remaining percents.

Second, this inhibition greatly depends on the request sizes used. For example, when a saturated read flow is disturbed with a one-at-a-time write request the read throughput may become 2 times lower for small-sized writes or 10 times lower for large-sized writes. This observation imposes yet another limitation on the maximum IO length that the scheduler advertises to the system. When configured the scheduler additionally limits the maximum write request length so that it will have a chance to dispatch mixed workload and still stay within the latency goal.

Unstable Workloads

If digging deeper we’ll see that there are actually two times more speed numbers for a disk. Each speed characteristic can in fact be measured in two modes — bursted or sustained. EBS disks are even explicitly documented to work this way. This surprise is often the first thing a disk benchmark measures — the documented (in ads) disk throughput is often the “bursted” one, i.e. the peak bandwidth the disk dies would show if being measured in 100% refined circumstances. But once the workload lasts longer than a few seconds or becomes “random” there starts a background activity inside the disk and the resulting speed drops. So when benchmarking the disk it’s often said that one must clearly distinguish between short and sustained workloads and mention which one was used in the test.

The iotune, by the way, measures the sustained parameters, mostly because scylla doesn’t expect to exploit burtsable mode, partially because it’s hard to pin this “burst.”

View the Full Agenda

To see the full breadth of speakers at P99 CONF, check out the published agenda, and register now to reserve your seat. P99 CONF will be held October 6 and 7, 2021, as a free online virtual conference. Register today to attend!