Latency FAQs

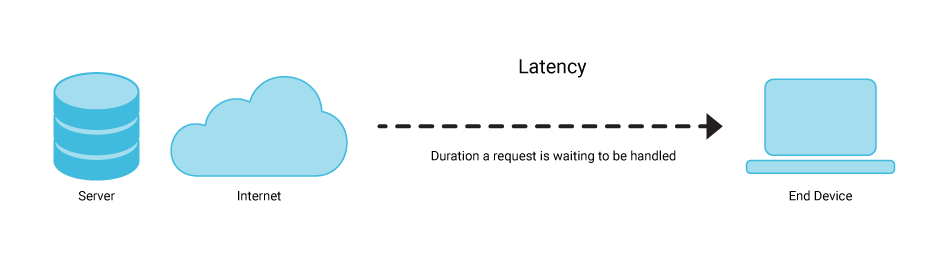

What is Latency in Networking?

Latency is the delay that occurs between a user’s action on a web application or network and the response they receive. Another latency definition is the total round trip time required for a packet of data to travel.

Latency usually occurs due to the distance between users and key network elements, including the internet or a privately managed wide-area network (WAN) and the user’s internal local-area network (LAN).

For example, as the user takes an action on their device, perhaps shopping for an item in an online store and buying it, several steps must happen before they see their request answered.

The user adds the item to their cart and the browser sends a request to the site’s servers that stores the data; this demands a certain amount of time, which depends on how much information is transmitted. The site server receives the request, and the first part of the latency cycle is complete.

The server then accepts or rejects the request and processes the data, each step also taking a certain amount of time, depending on the amount of data and the server’s capabilities. The site server replies with the necessary purchasing information to the user, and the user’s browser receives the request, and the product enters the cart, completing the latency cycle.

The total latency resulting from the request is the total of all the increments of time, from when the user first clicks on the “add” button to when they see it in the cart.

What is Latency in a Database?

Simply put, latency in a database is the total amount of time needed for the database to receive a request, process the transaction underlying the request, and respond correctly. In the case of the shopping cart example above, product information is liley stored in a NoSQL database. The ability to retrieve that information quickly has to do with database latency.

In databases, a delay occurs in reading and writing to and from different blocks of memory. Processors take time to identify the exact location for data. Intermediary devices, including switches, routers, and proxies, can also add to overall latency.

What is Data Latency?

Data latency is the time data packets take to be stored and retrieved. In business intelligence (BI), it is critical to reduce data latency to respond to changing market conditions rapidly and remain agile. Reducing data latency allows for more rapid business decisions with real-time data that can answer specific business questions.

What is Long Tail Latency?

Latency is typically calculated in the 50th, 90th, 95th, and 99th percentiles. These are commonly referred to as p50, p90, p95, and p99. Percentile-based metrics can be used to expose the outliers that constitute the ‘long-tail’ of performance. Measurements of 99th (“p99”) and above are considered measurements of ‘long-tail latency.

What are the Causes of Long Tail Latency?

Long-tail latency has many causes, but, significantly, it is not usually caused by application-specific problems or normal network lag. Instead, long-tail latency has systemic causes; it is usually caused by glitches in the underlying infrastructure. These glitches can include pauses due to JVM ‘garbage collection’, hypervisor interrupts, context switches, database repair, cache flushes, and so on. This makes long-tail latency very tricky to diagnose and fix, as it’s often a ‘whack-a-mole’ exercise.

Network Bottlenecks

Long-tail latency is mainly caused by network bottlenecks. IT commonly invests heavily in the best components available: great solid state drives (SSDs, and associated NVMes), CPUs, and memory. But they often shoot themselves in the foot by configuring a ‘narrow’ network. The result is that the highly performant hardware is bottlenecked by the network. It’s also common that SSD settings are misconfigured. Cloud SSDs have two associated throttles: burst and sustained. Background operations such as streaming and compaction can chew up the sustained throughput, which may be 1/10 of burst. While the backend process hogs I/O, there is no capacity left for bursts.

Speed Mismatch

As far as databases are concerned, there are two speeds that have to work together. The first is the combined speed of CPU and memory speed; the other is disk speed. CPU and memory tends to be faster, while the disk tends to be slower. This equation is evolving as SSD manufacturers are catching up to CPU improvements, but it is still often the case.

Speed mismatch is more commonly a problem with write workloads that are bounded by the disk. This can happen either because the disk is slow, or because the payloads are large. Large payloads shift the bottleneck to the disk. When the time comes to write to disk, any relative disk slowness will cause queries to back up in the buffer. This will result in outlier p99 latencies.

Exceeding the System’s Latency Budget

Every system is designed with a ‘latency budget’. In fact, you can view p95 and p99 latency as a function of the throughput that a given system is designed to support. The ‘budget’ itself is the ratio of latency to throughput.

Systems that handle big data have primary and secondary considerations. These include storage, CPU cores, memory (RAM), and network interfaces. Not only do you need to size your disks for basic data, you also need to calculate total storage needed based on your replication factor, as well as overhead to allow for compactions.

Modern servers running on Intel Xeon Scalable Processor Platinum chips are common across AWS, Azure, and Google Cloud. Server needs are based upon how much throughput each of these multicore chips can support.

These are all components that define a system’s latency budget. It’s important to understand and stay within those limitations. When they are exceeded, outlier latencies are virtually assured to occur.

What are the Effects of Long Tail Latency?

Some web pages can generate well over 100 HTTP calls to various services. Many of those services run on multi-tier infrastructure. A hiccup at any layer can create a latency outlier, resulting in a poor experience for a user. A very active backend service that is affected by long-tail latencies can have a serious site-wide impact.

Latency vs Response Time

The difference between latency and response time is that while latency refers to a delay in the system, response time includes both the actual processing time and any delays. In general, latency is simply the travel time, not the processing time, which is why distance is such an important factor. Response time takes the perspective of a client application and measures the end-to-end network transaction.

How to Improve Latency

There are a number of guidelines that your organization can follow to improve latency.

- Design a system that ensures p99 latency based on your organization’s throughput targets.

- Do not ignore performance outliers. Embrace them and use them to optimize system performance.

- Minimize the possibility of outliers by minimizing infrastructure. For a start, avoid external database caches. Shrink database clusters by scaling vertically on more powerful hardware.

- Adopt database infrastructure that can make optimal use of powerful hardware.

- Adopt a masterless database architecture.

- Minimize the vectors needed to resize the system. Ideally, limit vectors to system resources, such as CPU cores.

- Avoid databases that add complexity. Look for databases with a close-to-the hardware architecture.

- Take into account the price-performance of your database. You do not need to break your IT budget to achieve real-time long-tail performance.

Is ScyllaDB a Low Latency Database?

ScyllaDB is an open source, cloud (DBaaS), and enterprise NoSQL database known for low latency and high performance. With a highly scalable and easy-to-use architecture, ScyllaDB provides an always-on approach that generates ten times higher throughput with low and consistent latency [see benchmarks].

ScyllaDB’s ability to tame long-tail latencies has led to its adoption in industries such as media, cybersecurity, the industrial Internet of Things (IIoT), AdTech, retail, and social media.

ScyllaDB is built on an advanced, open-source C++ framework for high-performance server applications on modern hardware. The team that invented ScyllaDB has deep roots in low-level kernel programming. They created the KVM hypervisor, which now powers Google, AWS, OpenStack, and many other public clouds.

Modern hardware is capable of performing millions of I/O operations per second (IOPS). ScyllaDB’s asynchronous architecture takes advantage of this capability to minimize p99 latencies. ScyllaDB is uniquely able to support petabyte-scale workloads, millions of IOPs, and P99 latencies <10 msec while also significantly reducing total cost of ownership.

Over 400 game-changing companies like Disney+ Hotstar, Expedia, Discord, Trellix, Crypto.com, Zillow, Starbucks, Comcast, and Samsung use ScyllaDB for their toughest database challenges.

Does ScyllaDB Offer Additional Resources for People Interested in Low Latency?

ScyllaDB hosts the annual P99 CONF conference, where engineers from top companies share how they are solving their toughest high-performance, low-latency application challenges. The conference is free and virtual. Watch existing sessions on-demand or get details about the next P99 CONF at https://www.p99conf.io/