As we prepare for ScyllaDB Summit 2019, we are producing a series of blogs highlighting this year’s featured presenters. And a reminder, if you’re not yet registered for ScyllaDB Summit, please take the time to register now!

Today we are speaking with Daniel Barsky, Senior Data Scientist at Augury. His presentation at ScyllaDB Summit 2019 is entitled Augury: Real-Time Insights for the Industrial IoT.

When many people think of Machine Learning (ML) they’ll immediately presume you’re running on GPUs. But you are training your algorithms against ScyllaDB, which runs on CPUs. What led you to this configuration?

ScyllaDB offers us capabilities beyond training ML models. While the models themselves might be trained on either CPU or GPU, we need ScyllaDB for a different use case: generating features in a streaming setting, then storing and retrieving them, including an extensive history of the feature values.

When we train ML models, we typically start with a preliminary dataset-creation step, whose output we then use in training. That step takes advantage of the fact that ScyllaDB works well with parallel processing systems such as Apache Spark.

For those who are familiar already with the Augury use case for ScyllaDB, what new information will you be presenting, or presenting in more depth, for ScyllaDB Summit 2019?

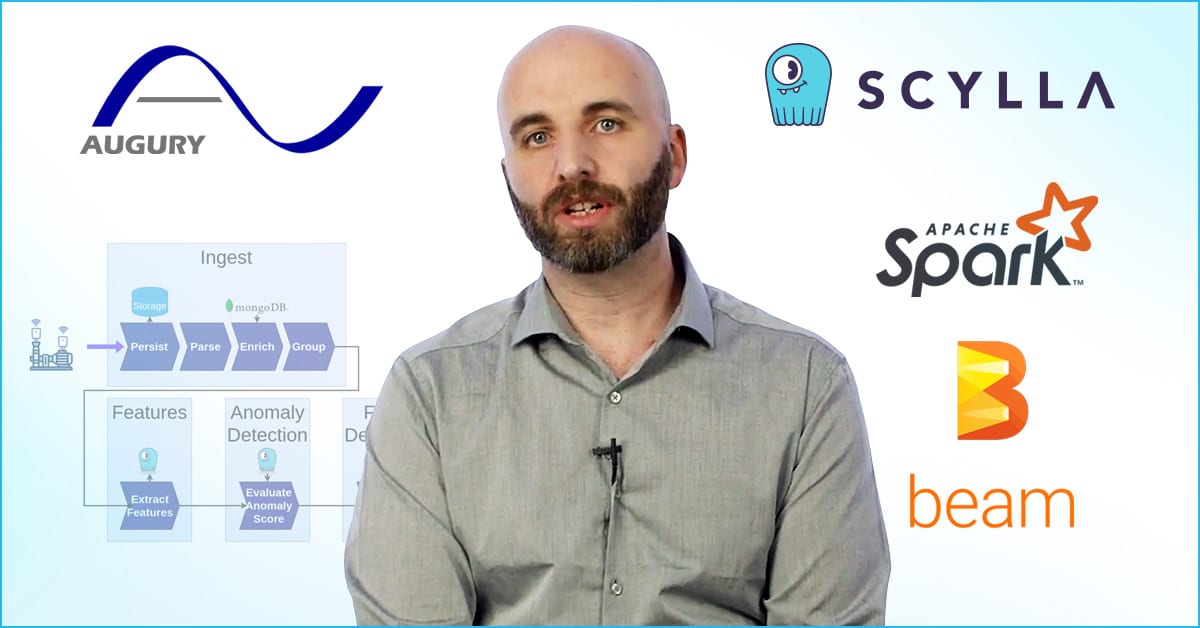

I will go more in depth into the specifics of our solution – cluster deployment and architecture, data modelling, etc. In addition, I will briefly present our next-generation data processing architecture, based on streaming pipelines, and will show how ScyllaDB fits into that architecture.

You’re doing a lot of time-series data work at Augury. You could have built your solution with a time-series database like KairosDB, which runs on top of ScyllaDB, or with a pure-play time-series system like InfluxDB. Why did you decide to implement ScyllaDB?

We’ve had some prior experience with time-series DBs in the context of monitoring, and more specifically, tracking IoT-related metrics. Unfortunately, pure time-series DBs weren’t a good fit for us, for a few reasons. First off, they’re built to model a single time-series variable at a time, and don’t really offer the ability to store and correlate high-dimensional time-series data. In addition, adding a new variable to an already existing multivariate time series was a basic requirement for us, one that time-series DBs rarely support but ScyllaDB does. Finally, we wanted to be able to access the data stored in the DB in a fast and parallelized way (e.g. using Apache Spark), and most time-series DBs we looked at didn’t really offer this functionality at the time. If there was a Spark connector, it would communicate with the underlying infrastructure rather than with the time-series DB itself.
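To make the multivariate requirement concrete, here is a minimal in-memory sketch of a wide-row layout in which each timestamp holds several variables and a new variable can appear later without touching older rows. It is an illustration only, not Augury's schema: the class `MultivariateSeries`, the machine ID `pump-1`, and the variable names are all hypothetical, and a plain dictionary stands in for a database table.

```python
from collections import defaultdict


class MultivariateSeries:
    """In-memory stand-in for a wide-row table keyed by (machine_id, timestamp)."""

    def __init__(self):
        # machine_id -> {timestamp -> {variable_name -> value}}
        self._rows = defaultdict(dict)

    def write(self, machine_id, timestamp, **variables):
        # Upsert: merge the given variables into the row for this timestamp.
        self._rows[machine_id].setdefault(timestamp, {}).update(variables)

    def read(self, machine_id, start, end, variables):
        # Rows come back in time order; a variable that was never
        # recorded for a timestamp is returned as None.
        rows = self._rows.get(machine_id, {})
        return [
            (ts, tuple(rows[ts].get(name) for name in variables))
            for ts in sorted(rows)
            if start <= ts <= end
        ]


series = MultivariateSeries()
series.write("pump-1", 100, vibration=0.12, temperature=41.0)
series.write("pump-1", 200, vibration=0.15, temperature=41.5)
# A new variable is introduced later; the earlier rows are left untouched.
series.write("pump-1", 300, vibration=0.14, temperature=42.0, acoustic=0.7)

result = series.read("pump-1", 100, 300, ["vibration", "acoustic"])
```

Reading `vibration` and `acoustic` together returns all three timestamps, with `None` for `acoustic` on the rows written before that variable existed.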

False positives on IIoT diagnostics can lead to false alarms and undue concerns from the client’s point of view. They also drive up costs through premature service dispatching. But failing to flag an issue correctly can lead to equipment damage and even catastrophic loss. How does Augury appropriately balance between being over- or under-sensitive to the warnings, triggers and alarms you receive, process and manage?

Augury exists to make sure that people can rely on the machines that matter to them, so we see ourselves as “machine doctors” to some extent: we will always try to catch every potential machine failure before it happens, even at the cost of some false alarms. We have multiple mechanisms in place to handle false alarms in a way that gives our customers a good experience, such as layered detectors (some general, some more specific) and a “second opinion” process for low-confidence detections.
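As a rough illustration of how layered detectors and a second-opinion step could fit together, consider the sketch below. Everything in it is an assumption made for the example: the two detector functions, their thresholds, the confidence values, and the `confident_at` cutoff are hypothetical and do not describe Augury's actual detectors.

```python
def general_detector(features):
    # Hypothetical broad rule: high vibration alone is mildly suspicious.
    return 0.5 if features["vibration"] > 0.3 else None


def bearing_detector(features):
    # Hypothetical specific rule: high vibration together with high temperature.
    if features["vibration"] > 0.3 and features["temperature"] > 60:
        return 0.9
    return None


def triage(features, detectors, confident_at=0.8):
    # Run every layer and keep the confidences of the detectors that fired.
    confidences = [c for c in (d(features) for d in detectors) if c is not None]
    if not confidences:
        return "no_alert"
    # Low-confidence detections are routed to a second opinion
    # instead of being sent straight to the customer.
    return "alert" if max(confidences) >= confident_at else "second_opinion"


detectors = [general_detector, bearing_detector]
quiet = triage({"vibration": 0.1, "temperature": 40}, detectors)
unsure = triage({"vibration": 0.4, "temperature": 40}, detectors)
urgent = triage({"vibration": 0.4, "temperature": 70}, detectors)
```

In this sketch a reading that only the general detector flags goes to review, while one that the specific detector flags with high confidence is raised directly.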

I hear you’re busy at work on a new next-gen architecture. While you’ll reveal more at the ScyllaDB Summit in full, did you wish to tease us with any particular buzzwords or concepts to get excited about?

Augury is monitoring more machines than ever before, and the number is increasing very fast. To be able to support the rates of data processing we will need in the short and long term, we’re moving to an architecture based on streaming pipelines, using Apache Beam as the engine behind it. This means that instead of a centralized microservice handling the data processing, multiple stages of the pipeline enrich the data and hand it off to one another, until the desired insights are created and routed to where they need to go. This has implications for outgoing access (such as DB access), but in my talk I will show how ScyllaDB plays nicely with this architecture for us.
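The idea of stages that enrich the data and hand it off to one another can be sketched with plain Python generators. This deliberately does not use the Apache Beam API; it only mimics the shape of a staged pipeline. The stage names `parse`, `add_feature`, and `detect`, the baseline values, and the threshold are hypothetical.

```python
def parse(readings):
    # Stage 1: turn raw (machine_id, text) pairs into structured events.
    for machine_id, raw in readings:
        yield {"machine_id": machine_id, "vibration": float(raw)}


def add_feature(events, baseline):
    # Stage 2: enrich each event with its deviation from a per-machine baseline.
    for event in events:
        deviation = event["vibration"] - baseline[event["machine_id"]]
        yield {**event, "deviation": deviation}


def detect(events, threshold):
    # Stage 3: turn the enriched event into an insight (alert or not).
    for event in events:
        yield {**event, "alert": event["deviation"] > threshold}


def run_pipeline(readings, baseline, threshold):
    # Each stage consumes the previous stage's output lazily, one event at a time.
    return list(detect(add_feature(parse(readings), baseline), threshold))


insights = run_pipeline(
    [("pump-1", "0.10"), ("pump-1", "0.40")],
    baseline={"pump-1": 0.12},
    threshold=0.2,
)
alerts = [event["alert"] for event in insights]
```

Because each stage only sees the event handed to it, any lookup against a database has to happen inside a stage, which is the kind of outgoing access the answer above refers to.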

What sort of hobbies or interests do you have, or little known facts about you that you’d like to share with our audience?

Augury was such a good fit for me because IoT, smart homes and home automation have been a passion of mine for some time. In addition to Big Data, I’m always trying to automate my home and work environments, for example by automating the parking garage gate to recognize employee license plates or integrating the hot water heater with the office Slack.

Thanks for taking the time to talk with me today, Daniel. I’m sure our users will be eager to attend your session to learn more!